The Hype Is Real. The Gap Is Realer.

Everyone in the PXM world is talking about agents right now. Pimcore just shipped experimental MCP Server support, letting large language models connect directly to your product catalog through a standardized protocol. Other PIM vendors are moving in the same direction. The LinkedIn feeds are full of demos: someone opens Microsoft Copilot inside Word, types “give me all red Italian cars with a price under €40K,” and Pimcore returns the exact results from a live catalog.

That is genuinely impressive. It works. And it represents a real shift in how AI systems interact with product data.

But here is the thing - everyone is celebrating the agent reading the PIM. Nobody is asking who fed the PIM in the first place. That is a serious omission.

That question matters more than almost anything else in 2026. And the answer, for most companies, is still: a human. With a spreadsheet. And a lot of patience.

Why Agentic PXM Is Having Its Moment

Pimcore Inspire 2026 on April 14 in Salzburg is being billed as a showcase for Agentic PXM and Studio 1.0 - the first full release of their rebuilt admin interface. The event marks a real inflection point: Pimcore Studio moves from beta to the default way of working with Pimcore, and the platform’s AI direction is front and center. Look, this is not hype for its own sake.

The broader trend is just as pronounced. Commercetools called 2026 the breakout year for agentic commerce, pointing to consumer readiness, maturing LLMs, and new interoperability standards all converging at once. Morgan Stanley predicts nearly half of online shoppers will use AI shopping agents by 2030, accounting for roughly 25% of their spending. Gartner says 40% of enterprise applications will embed task-specific AI agents by 2026, up from low single digits just a couple of years ago.

And Informatica summed up the pitch for Agentic AI PIM as well as anyone: AI agents that can “read a 20-page supplier catalog, identify which data points are relevant for a product record, extract them, structure them correctly and even generate compelling, channel-specific marketing descriptions from those technical specs.”

Sounds amazing. And it is - once the data is already in the PIM.

That conditional phrase is where everything breaks down.

The Question Nobody Is Asking

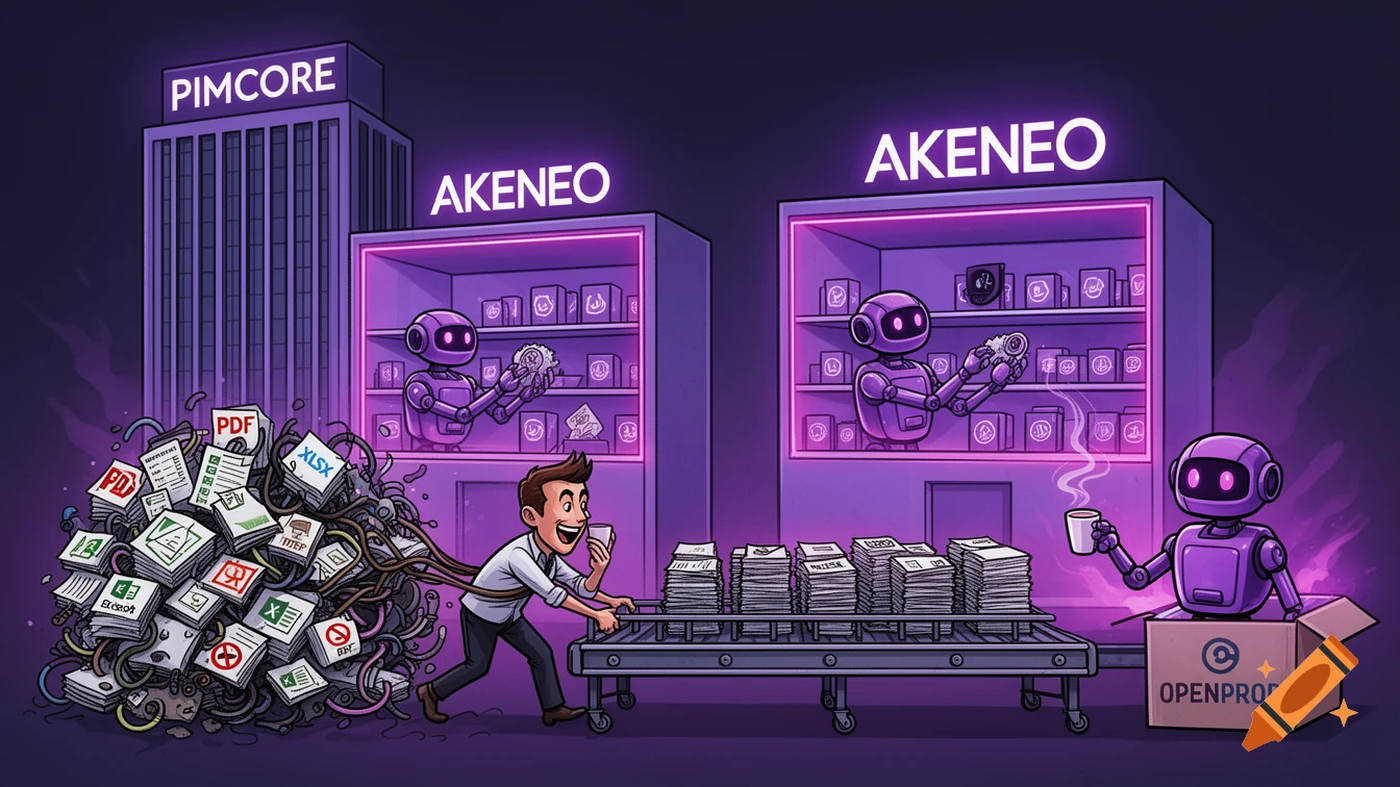

Here is the sequence everyone assumes is already solved: a supplier sends files, files become clean structured data, clean structured data goes into the PIM, agents read the PIM and do clever things. The thing is, that second step - files to clean structured data - is where the whole model collapses in practice.

Based on LemonMind analysis of 70+ implementations, the bottleneck in virtually every PIM project is getting data in, not managing it once it arrives.

Think about what “files” actually means in practice. Honestly, most people outside of implementation work have no idea. Suppliers send:

- PDFs with merged cells and scanned specs from 2009

- Excel sheets where column A is “Product Name” for the first 80 rows, then “SKU” for the next 40, because someone reorganized the template mid-catalog

- Mixed units (some products in mm, others in cm, a few in inches, all in the same file)

- Images embedded inside Word documents

- CSV exports from ERP systems that use internal codes your PIM has never heard of

- Occasionally, a handwritten note photographed on someone’s phone

You cannot send that to an AI agent and ask it to reason about your product catalog. The agent needs the data to already be structured, validated, and mapped to your taxonomy. That is the gap. And right now, most companies are closing it the same way they did in 2010: manually.

The Real Cost of “Getting Data In”

Manual product data entry costs €14,000 per 1,000 products. That number comes from LemonMind’s detailed time-tracking audits across 70+ client projects, and it is the median - not the worst case.

The math is straightforward and almost nobody does it. The median time to onboard a single product - reading the supplier file, mapping attributes to PIM fields, uploading images, validating required fields - is 25 minutes. For 1,000 products, that is 417 hours of pure entry time. Add 20% for real-world conditions (meetings, errors, context switching) and you are at 500 hours. At €30/hour for a mid-level data manager in Europe, that is €15,000 base. Factor in QA rework - manual entry carries a 15-20% error rate, and your QA team will spend 30-40% of their time fixing those mistakes - and the median settles at €14,000 per 1,000 products.

The real kicker is what this looks like at scale. A fashion wholesaler we worked with onboards 1,200 SKUs per season, four seasons a year. That is 4,800 products annually. Their annual spend on manual data entry labor: €67,000. Their Pimcore license: €18,000. They were spending nearly four times more on getting data into the PIM than on the PIM itself - and had never calculated it until we showed them.

That is not unusual. In a survey of 30 LemonMind PIM clients, 68% could not estimate their cost-per-product for onboarding.

Three months of work. €14,000. For 1,000 products. With errors.

Why This Gap Is Catastrophic for Agentic PXM

Here is why this specific problem becomes critical in the agentic era, not just annoying.

Agents amplify. That is kind of the point. A well-configured agent can generate product descriptions, optimize for channels, manage variants, and push updates across your entire catalog faster than any human team. But that amplification works in both directions.

An agent working from clean, well-structured data with consistent attributes and validated values? It compounds your productivity. An agent working from patchy, inconsistent, partially-mapped data? It compounds your errors. Wrong units get propagated across channel outputs. Missing attributes create silent failures in downstream syndication. Inconsistent categorization means your agent’s recommendations do not match what customers actually see.

The commercetools analysis of agentic commerce trends puts it plainly: “AEO (Answer Engine Optimization) becomes essential. Structured data, enriched metadata and clean catalogs determine whether an agent can understand and recommend a SKU.” This is not a nice-to-have. Agents that cannot parse your product data cannot recommend your products. They will recommend a competitor whose data is cleaner.

The MetaRouter analysis adds another dimension: agent-to-agent B2B commerce is emerging, with supplier agents negotiating directly with retailer procurement agents. That entire transaction chain requires structured data at the input. If your supplier data pipeline is still manual, you are already behind for the next generation of commerce infrastructure.

Honestly, the companies racing to deploy Agentic PXM without solving their data intake problem are like Formula 1 teams investing in the world’s best aerodynamicist but still filling the tank by hand with a garden hose.

What the Competitors Are Doing (And Not Doing)

To be fair, this problem is not invisible. Akeneo has its Supplier Data Manager for collecting and validating supplier content. Salsify has its Supplier Experience Management environment for onboarding and governing supplier data. Informatica has announced its CLAIRE Product Experience Agent for extracting data from PDFs and spreadsheets.

These are real product efforts. But look more carefully at the positioning.

Akeneo SDM is primarily a portal - it exposes your data requirements to suppliers and gives them a structured form to fill in. That helps if your suppliers are cooperative and competent. It does not help when a supplier sends you a 47-sheet Excel export from their ERP with custom column names, merged header rows, and six different ways of writing “N/A.” The gap between “here is our template” and “here is the chaos we actually receive” is enormous.

Salsify’s approach is similar - a governed environment for supplier content that works well within the Salsify ecosystem. The question is what happens to the suppliers who do not fit that mold, which is most of them in any European manufacturing or wholesale context.

The competitor approaches solve the compliance problem (“suppliers, please conform to our format”). OpenProd solves the reality problem (“suppliers sent us chaos, let us extract meaning from it anyway”).

That distinction matters enormously when your supplier base includes 80 manufacturers across five countries, each with their own legacy systems, data quality standards, and communication preferences.

The Missing Layer

Look - here is what the technology stack for Agentic PXM actually looks like when you draw it out honestly:

Supplier data (chaos, varied formats, inconsistent quality) ↓ [this gap is where the 3 months live] Structured PIM data (clean, validated, taxonomically consistent) ↓ AI agents (MCP Server, Claude, Copilot, custom workflows) ↓ Customer experiences (product pages, recommendations, B2B catalogs)

Everyone is building at the bottom two layers. The agents are getting smarter. The PIM interfaces are getting cleaner. Pimcore Studio 1.0 is genuinely good.

The top layer - turning supplier chaos into PIM-ready data - needs its own category of solution. We call it AI Product Data Middleware. It sits between your suppliers and your PIM, and it does the work that no human team should be doing manually in 2026: reading arbitrary file formats, extracting structured attributes, mapping to your taxonomy, flagging exceptions, and pushing clean records into the PIM.

OpenProd is that layer. It is the first PIM-agnostic MCP server for product data - meaning it works with Pimcore, Akeneo, Salsify, or any other PIM your stack includes. The pipeline goes from supplier file to structured PIM record in hours, not months. Based on the same cost data above, the comparison is stark: manual onboarding at €14,000 per 1,000 products, AI-assisted with human review at €502.50. That is up to 95% time reduction on data intake.

Not 95% on everything. Specifically on the part that was consuming three months of labor and introducing errors at a 15-20% rate.

What Clean Data at Agent Speed Actually Means

The phrase “agent speed” gets thrown around a lot right now. What it means in practice, for PIM, is this: your AI agents can only move as fast as your data intake pipeline. If that pipeline is still a human with a spreadsheet, your agentic PXM runs at human speed - with the illusion of AI sophistication on top. The thing is, most stacks in 2026 look exactly like that.

Getting to actual agent speed requires three things:

- Automated extraction from arbitrary supplier formats (not just the ones that conform to your template)

- Intelligent mapping to your PIM taxonomy, with confidence scoring and exception handling

- Human review on the exceptions only - not on every record

When those three things work, the 3-month supplier onboarding becomes a 2-day process. The €14,000 per 1,000 products becomes €500. And the agent sitting at the bottom of your stack - the one generating descriptions, optimizing channels, syndicating to retail partners - has clean data to work with from day one.

That is when Agentic PXM actually works. Not before.

Come See It Live

We are presenting a masterclass at Pimcore Inspire 2026 - “From Excel Hell to AI Onboarding” at 16:15 on April 14 in Salzburg. It is a live demonstration of exactly what this article describes: supplier files going in one end, structured PIM-ready data coming out the other, at a fraction of the cost and time.

Honestly, it is the most important 25 minutes of the day if you are running a PIM that is still fed by humans. If you are building with Pimcore and thinking seriously about Agentic PXM, the data intake question is the one you need to solve first. Everything else depends on it.

Details and registration at openprod.io/inspire.