The Model Context Protocol is quietly becoming the most important infrastructure shift in PIM since headless architecture. Most PIM vendors haven’t noticed yet. A few have. Here’s why it matters — and why acting late will cost you.

The Problem With How We Move Product Data Today

If you’ve ever integrated a PIM system with anything — an ERP, a marketplace, a supplier portal — you know the drill. REST APIs, custom connectors, middleware, mapping tables, transformation scripts. For every new data source, your team builds another pipeline. For every schema change, someone rewrites the ETL job.

Across 70+ Pimcore implementations, LemonMind has seen the same pattern: companies spend 40-60% of their total PIM project budget on integrations alone. Not on the PIM itself. Not on data modeling. On moving data from point A to point B.

That’s not a technology problem. That’s an architecture problem. And MCP is the first credible answer.

If you’ve been dealing with the real cost of manual product data entry, you already know how expensive these integration pipelines get. MCP changes that equation fundamentally.

What Is MCP and Why Should PIM Professionals Care?

Model Context Protocol (MCP) is an open standard — originally developed by Anthropic — that lets AI agents interact with external systems through a unified, tool-based interface. Think of it as a universal adapter between AI and your business data.

Instead of building custom API integrations for every system, an MCP server exposes structured tools that any AI agent can discover, understand, and call. The agent doesn’t need to know your API documentation. It reads the tool definitions, understands what’s available, and acts.

For product data management, this changes everything:

- No more custom connectors per data source. An MCP-enabled PIM exposes tools like search_products, update_attributes, and suggest_categories. Any agent, whether it’s your internal automation or a third-party tool, can call them.

- No more token-wasting trial and error. Traditional AI integrations with APIs often burn thousands of tokens figuring out endpoints, parameters, and authentication. MCP tools are self-describing. The agent calls the right tool on the first try.

- No more rigid, one-way data flows. MCP enables bidirectional, context-aware communication. An agent extracting data from a supplier PDF can query your PIM’s data model in real time, match attributes dynamically, and push clean data — all in one flow.
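To make "self-describing tools" concrete, here is a minimal sketch of how a PIM might answer MCP tool requests. It uses no SDK and is not any vendor's implementation. The `tools/list` and `tools/call` methods and the `name` / `description` / `inputSchema` fields follow the MCP specification; the tool descriptions, the schemas, and the in-memory `CATALOG` are assumptions made for illustration, and suggest_categories is left out for brevity.

```python
import json

# Hypothetical tool catalogue. Tool names come from the article; the
# descriptions and JSON Schemas are illustrative assumptions.
TOOLS = [
    {
        "name": "search_products",
        "description": "Find products whose name contains the query text.",
        "inputSchema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    },
    {
        "name": "update_attributes",
        "description": "Set attribute values on one product, identified by SKU.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "sku": {"type": "string"},
                "attributes": {"type": "object"},
            },
            "required": ["sku", "attributes"],
        },
    },
]

# Stand-in for the PIM's data store (assumption).
CATALOG = {"SKU-1": {"name": "Cordless drill", "voltage": "18 V"}}


def text_result(text: str, is_error: bool = False) -> dict:
    """Wrap a string in the content/isError shape of a tool call result."""
    return {"content": [{"type": "text", "text": text}], "isError": is_error}


def call_tool(name: str, args: dict) -> dict:
    """Run one tool against the in-memory catalogue."""
    if name == "search_products":
        query = args["query"].lower()
        hits = [sku for sku, p in CATALOG.items() if query in p["name"].lower()]
        return text_result(json.dumps(hits))
    if name == "update_attributes":
        if args["sku"] not in CATALOG:
            return text_result(f"Unknown SKU: {args['sku']}", is_error=True)
        CATALOG[args["sku"]].update(args["attributes"])
        return text_result(json.dumps(CATALOG[args["sku"]]))
    return text_result(f"Unknown tool: {name}", is_error=True)


def handle(request: dict) -> dict:
    """Answer a JSON-RPC 2.0 request for the two tool-related methods."""
    method, params = request["method"], request.get("params", {})
    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call":
        result = call_tool(params["name"], params.get("arguments", {}))
    else:
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}
```

An agent would first send `{"jsonrpc": "2.0", "id": 1, "method": "tools/list"}` to discover what exists, then issue a `tools/call` with a tool name and arguments. The schemas in the listing are what let it build a valid call without reading separate API documentation.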

The Race Is Already On

In the last 30 days, three significant moves happened in the PIM-MCP space:

Struct PIM announced MCP server support on their 2026 roadmap, explicitly positioning it as “secure AI agent interaction with PIM data.” They see the same thing we see: agents are the new integration layer.

Sales Layer went further — they’ve already deployed a live MCP server with read-only and read-write modes, ISO 27001 compliance, and OAuth 2.0 authentication.

Pimcore is building specialized MCP endpoints as part of their Agent Bundle strategy — providing partners with MCP services that are fast, efficient, and purpose-built for agent-to-PIM communication. Their Copilot documentation already outlines multi-step agentic workflows.

This is not a theoretical future. This is Q1 2026.

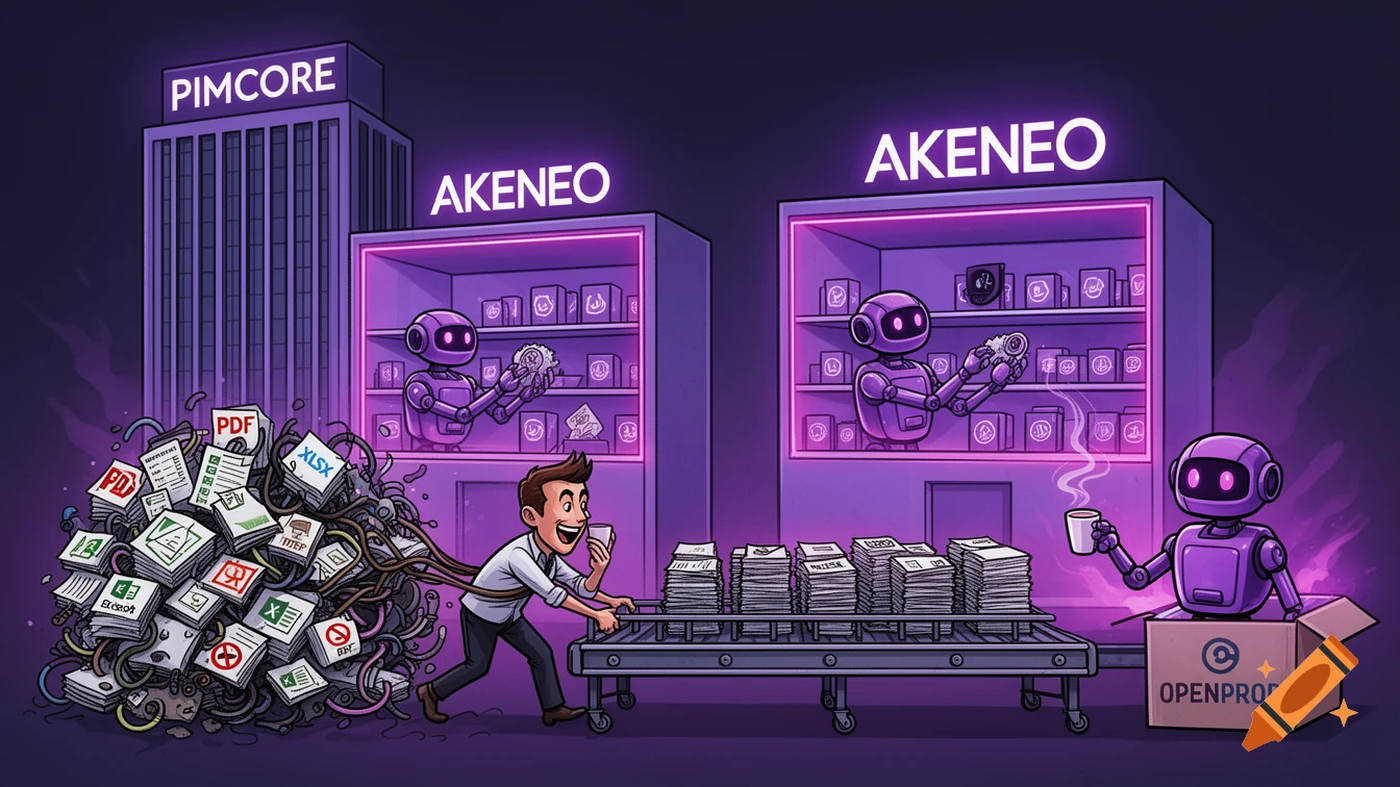

Meanwhile, Akeneo — the largest pure-play PIM vendor — has shown no public MCP strategy. Their Supplier Data Manager uses traditional API-based integration. That gap will widen fast.

What This Means for Product Data Onboarding

Here’s where MCP gets really interesting — and where openProd.io’s AI extraction pipeline lives.

Product data onboarding is the hardest integration problem in PIM. Supplier data arrives as PDFs, Excel spreadsheets, XML feeds, images with embedded text. It’s messy, inconsistent, and different for every supplier. If you’ve read about the three bottlenecks blocking your PIM ROI, you know this is where most implementations stall.

Traditional ETL-based onboarding requires:

- Manual analysis of the source file

- Custom mapping to the PIM data model

- Transformation rules for data cleaning

- Testing and validation

- Repeat for every new supplier

This process takes weeks to months. It costs €14K per 1,000 products in manual labor alone (LemonMind data, 2025).

With MCP-enabled AI agents, the flow looks different:

- Agent reads the source file (PDF, Excel, whatever)

- Agent queries the PIM’s MCP server: “What’s your data model? What classes exist? What attributes are required?”

- Agent maps and transforms data in real time, using the PIM’s own schema as context

- Agent pushes clean, validated data through MCP tools — no custom connector needed

- Human reviews and approves
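The mapping and validation steps in that flow can be sketched roughly as follows. This is an illustration under stated assumptions, not openProd.io's or Pimcore's implementation. `PIM_SCHEMA` stands in for whatever the PIM's MCP server returns when asked about its data model. `SYNONYMS` is a fixed table standing in for the matching a real agent would infer from the source file and the schema. Every attribute name here is hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical target schema, as a PIM might describe it to an agent.
PIM_SCHEMA = {
    "sku": {"required": True},
    "name": {"required": True},
    "weight_kg": {"required": False},
}

# Stand-in for the agent's inferred header-to-attribute matching (assumption).
SYNONYMS = {
    "article no": "sku",
    "product name": "name",
    "weight (kg)": "weight_kg",
}


@dataclass
class Draft:
    """One mapped product plus anything a human reviewer should look at."""
    values: dict
    issues: list = field(default_factory=list)

    @property
    def ready_for_review(self) -> bool:
        # A draft with no open issues can go straight to human approval.
        return not self.issues


def map_row(row: dict, schema: dict) -> Draft:
    """Map one supplier row onto the PIM schema and record validation issues."""
    values, issues = {}, []
    for header, raw in row.items():
        target = SYNONYMS.get(header.strip().lower())
        if target in schema:
            values[target] = raw.strip()
        else:
            issues.append(f"unmapped column: {header}")
    # Check required attributes against the schema the PIM reported.
    for attr, rules in schema.items():
        if rules["required"] and not values.get(attr):
            issues.append(f"missing required attribute: {attr}")
    return Draft(values, issues)
```

Calling `map_row({"Article No": "A-100", "Product Name": "Drill"}, PIM_SCHEMA)` yields a draft with no issues, while a row with an unknown column or a missing name carries those issues forward to the human review step instead of failing silently.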

The key difference: the agent understands both sides of the equation simultaneously. It doesn’t need a pre-built connector. It doesn’t need mapping tables maintained by a developer. It reads the source, reads the target, and figures out the bridge.

This is exactly what openProd.io does. We were the first product data onboarding tool to ship an MCP server — and now we’re integrating with Pimcore’s MCP endpoints to create a fully agent-to-agent data pipeline. No traditional API integration required. See how this compares to Pimcore’s native approach.

The Strategic Question for PIM Buyers

If you’re evaluating PIM systems in 2026 — or auditing the ROI of your current one (here’s how to build that business case) — add this to your RFP:

“Does your platform expose an MCP server? What tools are available? Can third-party AI agents interact with your data model programmatically?”

If the answer is no — or “we’re considering it” — you’re looking at a system that will require expensive custom integration for every AI-powered tool you want to use in the next 2-3 years.

The vendors building MCP today are the ones who understand that the integration layer is shifting from developer-built pipelines to agent-driven orchestration. This isn’t about replacing developers — it’s about letting them focus on business logic instead of plumbing.

The Numbers Behind the Shift

Let’s make this concrete.

Traditional API integration cost for a mid-size PIM project:

- Custom connector development: €15,000 - €40,000

- Maintenance per year: €5,000 - €12,000

- Time to first data sync: 4-8 weeks

- Per-source marginal cost: €3,000 - €8,000

MCP-based integration cost:

- MCP server setup (if the PIM supports it): included

- Agent configuration: hours, not weeks

- Time to first data sync: same day

- Per-source marginal cost: near zero (agent adapts dynamically)

For a company onboarding data from 20 suppliers per year, the per-source marginal cost alone (20 × €3,000 - €8,000) puts the difference between traditional API integration and MCP-based orchestration at €60,000 - €160,000 annually. That’s not a rounding error. That’s a headcount.
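The annual figure can be reproduced from the per-source marginal cost listed earlier. The short sketch below does only that arithmetic. It assumes the MCP-side marginal cost is exactly zero (the article says "near zero") and leaves out connector development and maintenance; the function name is illustrative.

```python
SUPPLIERS_PER_YEAR = 20
# Traditional per-source marginal cost range in EUR, from the list above.
PER_SOURCE_COST_EUR = (3_000, 8_000)


def annual_per_source_cost(sources: int, per_source: tuple[int, int]) -> tuple[int, int]:
    """Return the (low, high) yearly cost of onboarding `sources` new sources."""
    low, high = per_source
    return sources * low, sources * high


low, high = annual_per_source_cost(SUPPLIERS_PER_YEAR, PER_SOURCE_COST_EUR)
print(f"EUR {low:,} - EUR {high:,} per year")  # EUR 60,000 - EUR 160,000 per year
```

Swapping in your own supplier count and per-source range gives a first-order estimate for your own situation.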

Your MCP Readiness Checklist

Before your next PIM evaluation or vendor review, assess where you stand:

- Current integration count: How many custom connectors are you maintaining today? ____

- Annual integration cost: What do you spend on connector maintenance per year? €____

- Time to onboard a new supplier: How many weeks from first file to clean data in PIM? ____

- Vendor MCP support: Does your PIM expose MCP endpoints? Yes / No / Planned

- AI agent readiness: Can your current tools interact with AI agents without custom code? Yes / No

If you answered “No” to the last two questions, and the integration costs from the first three are growing, the ROI of switching to MCP-native tools is already positive.

What Comes Next

MCP is still early. Not every PIM supports it. Not every AI agent speaks it fluently. But the trajectory is clear:

- 2026: First movers ship MCP servers (Struct, Sales Layer, openProd.io, Pimcore via Agent Bundle)

- 2027: MCP becomes a standard RFP requirement for enterprise PIM evaluations

- 2028: PIM vendors without MCP support lose deals to those that have it, just as monolithic PIMs lost deals to headless platforms five years ago

The companies that build MCP into their stack now will have a structural cost advantage in data onboarding, supplier integration, and AI-powered enrichment. The ones that wait will spend the next three years building custom connectors that the market has already moved past.

The Bottom Line

The PIM industry is about to go through the same architectural shift it went through with headless. The vendors who embraced APIs and composable architecture early won. The ones who clung to monolithic, all-in-one approaches lost market share.

MCP is the next version of that shift. It’s not about whether AI agents will become the primary integration layer for product data. It’s about when. And the answer is: now.

The question for your organization is simple: will you build for the agent-driven future, or will you keep paying developers to maintain integration pipelines that AI can handle in seconds?

Your CFO will have an opinion on that.

Sources & Further Reading

- Anthropic — Model Context Protocol announcement and specification

- Struct PIM — 2026 Roadmap: MCP server for secure AI agent interaction

- Sales Layer — MCP Server for PIM: live endpoint documentation

- Pimcore — Copilot documentation: multi-step agentic workflows

- Akeneo — Supplier Data Manager changelog and updates

- LemonMind — Implementation cost data from 70+ Pimcore projects (2019-2026)

- openProd.io — Developer Hub: REST API, MCP Server, Webhooks

Cost figures are based on LemonMind’s internal project data across 70+ PIM implementations for mid-size European enterprises (2019-2026). Individual results vary based on catalog complexity, number of suppliers, and existing infrastructure.