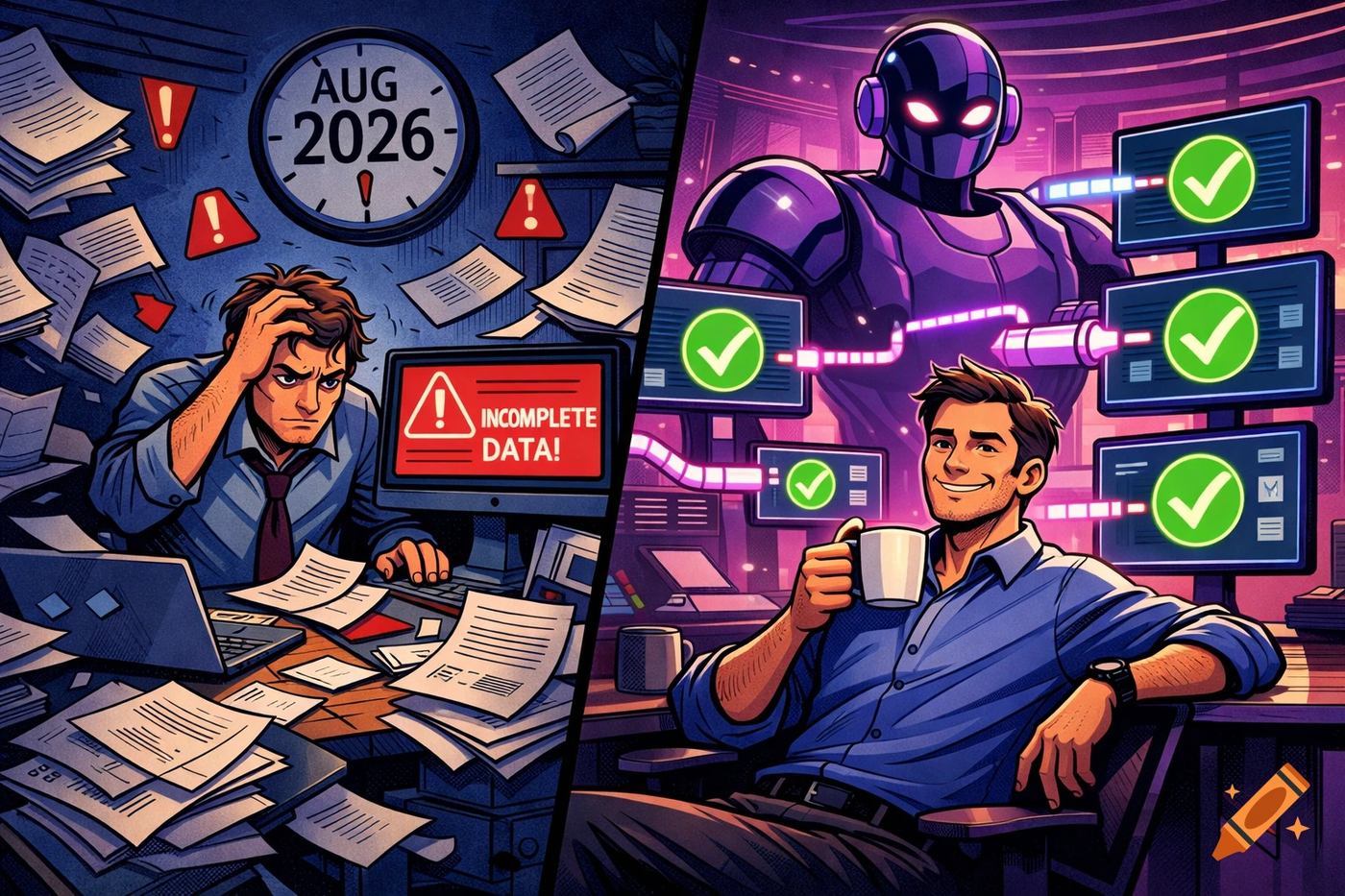

If your supplier onboarding still runs on emailed XLSX attachments, you are not running a process. You are running a lottery.

I have nothing against spreadsheets. I have a lot against what spreadsheets do to product teams.

Because the moment you rely on Excel as your supplier onboarding system, you silently accept three things:

- You will keep paying smart people to do dumb copy-paste work.

- Your PIM will get blamed for problems it did not create.

- Your AI initiatives will stall, because an agent is only as good as the product data you feed it.

This is a contrarian take, but it is also a practical one: most companies do not have a Pimcore problem. They have a supplier onboarding pipeline problem.

Pimcore can import, validate, and model data. The mess usually happens before the data ever touches Pimcore.

This article is a case-study-style playbook that we will show live at Pimcore Inspire 2026 in Salzburg. And yes, we will move fast.

How do you onboard supplier product data into Pimcore without losing your weekend?

First, let’s call the thing by its name: supplier onboarding is a throughput problem.

You can have the cleanest Pimcore data model on Earth, and still spend weeks waiting for “the final version” of an Excel file. Been there, done that, got the headache.

A workable onboarding flow has three outcomes:

- You can accept messy supplier inputs without breaking.

- You can normalize into your schema predictably.

- You can import into Pimcore repeatedly, without someone babysitting the process.

If you have to hand-map columns every time, chase missing attributes by email, and re-run imports because the supplier changed headers, you do not have a pipeline. You have a recurring incident.

Rhetorical question time: if the pipeline only works when your best product person is online, is it really a pipeline?

Why does supplier onboarding still feel like Excel archaeology in 2026?

You know the scene.

A supplier sends an XLSX file that looks fine at first glance. Then you open it and the usual suspects show up:

- column headers not on the first row

- multi-row headers that bury the actual data

- required columns missing

- columns in a different order than last time

- merged cells that turn a table into modern art

Flatfile describes this exact class of failures: columns that do not match expected field names, headers not on the first line, missing required columns, and merged cells that make source-to-target mapping harder. That is not “edge case” behavior. That is Tuesday. https://flatfile.com/blog/10-data-onboarding-problems-cs-leaders-face-and-how-to-fix-them/
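
To make the first few failures concrete, here is a minimal sketch of header-row detection and a required-column check. It assumes the sheet has already been read into a list of rows (by whatever XLSX reader you use), and the field names in `EXPECTED_FIELDS` are hypothetical placeholders for your own schema. It does not handle merged cells or multi-row headers.

```python
from typing import Optional, Sequence

# Hypothetical target field names; replace with your own schema.
EXPECTED_FIELDS = {"sku", "name", "gtin", "weight", "unit"}


def normalize_header(cell: object) -> str:
    """Lower-case a cell and collapse whitespace so 'Product  Name ' compares cleanly."""
    return " ".join(str(cell or "").lower().split())


def find_header_row(rows: Sequence[Sequence[object]], min_hits: int = 3,
                    scan_limit: int = 20) -> Optional[int]:
    """Return the index of the first row with at least `min_hits` expected
    field names, or None if no such row is found within `scan_limit` rows."""
    for index, row in enumerate(rows[:scan_limit]):
        hits = sum(1 for cell in row if normalize_header(cell) in EXPECTED_FIELDS)
        if hits >= min_hits:
            return index
    return None


def missing_required(header: Sequence[object], required: set[str]) -> set[str]:
    """Report required fields that are absent from the detected header row."""
    present = {normalize_header(cell) for cell in header}
    return required - present


# A typical supplier sheet: a title row, a blank row, then the real header.
rows = [
    ["Supplier price list 2026", None, None, None],
    [None, None, None, None],
    ["SKU", "Name", "Weight", "Unit"],
    ["A-100", "Hammer", "0.5", "kg"],
]
header_index = find_header_row(rows)
gaps = missing_required(rows[header_index], {"sku", "gtin"})
```

Exact name matching is deliberately strict here. It tells you that something is off without guessing what the supplier meant.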

Now add the hidden coordination tax:

- one person tries to map fields

- another fixes values

- a third emails the supplier for missing attributes

- then mapping breaks because the file layout changed again

That loop can run for weeks. Across hundreds of suppliers, you have effectively built a data factory that produces stress. Not great.

Pimcore Data Importer: supported formats, mapping, preview, cron, and logging

Pimcore’s Data Importer is a strong baseline. It supports importing from CSV, XLSX, JSON, and XML, lets you define mappings with simple transformations, and gives you a preview before running the import. https://docs.pimcore.com/platform/2024.2/Data_Importer/

It also supports running imports directly in Datahub or on a regular basis via cron definitions, with status updates and extensive logging. https://docs.pimcore.com/platform/2024.2/Data_Importer/

In other words: Pimcore is not the bottleneck.

But here is the uncomfortable truth. The Data Importer assumes your input has a shape you can map reliably. When supplier file layouts keep drifting, your mapping becomes a living document. That is where onboarding time goes to die.
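
One way to catch drift before it reaches the importer is to compare the headers of each new file against the last layout you mapped. The sketch below is an assumption about how such a check could look, not something Pimcore ships; `DriftReport` and `detect_drift` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriftReport:
    added: tuple[str, ...]      # columns that are new in the current file
    removed: tuple[str, ...]    # columns that disappeared since the last file
    reordered: bool             # shared columns appear in a different order

    @property
    def is_stable(self) -> bool:
        return not (self.added or self.removed or self.reordered)


def detect_drift(previous: list[str], current: list[str]) -> DriftReport:
    """Compare two header rows, ignoring case and surrounding whitespace."""
    prev = [h.strip().lower() for h in previous]
    curr = [h.strip().lower() for h in current]
    added = tuple(h for h in curr if h not in prev)
    removed = tuple(h for h in prev if h not in curr)
    # Compare order only over the columns present in both files.
    shared_prev = [h for h in prev if h in curr]
    shared_curr = [h for h in curr if h in prev]
    return DriftReport(added, removed, shared_prev != shared_curr)


report = detect_drift(["SKU", "Name", "Weight"], ["Name", "SKU", "Weight (kg)"])
```

If `report.is_stable` is false, stop and review the mapping instead of letting the import run against a layout it was never configured for.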

So the real question becomes: how do you make the input stable enough that Pimcore’s importer can do its job without constant babysitting?

What is AI Product Data Middleware and why it belongs between suppliers and Pimcore

This is the missing layer most stacks do not talk about.

A PIM is where product data should end up. Onboarding is where product data should get cleaned, normalized, validated, and made predictable.

An AI Product Data Middleware sits between suppliers and Pimcore.

It does three boring things extremely well:

- reads ugly supplier files

- transforms them into your schema

- pushes clean data into Pimcore repeatedly, without drama

Boring is good here. Every manual touch point adds latency and risk. Every “quick fix” becomes tomorrow’s operational debt.

Based on LemonMind’s analysis of 70+ implementations, the highest-performing teams treat onboarding like a pipeline, not a project.

Colloquial version: if your onboarding depends on heroics, you are already in trouble.

Model Context Protocol (MCP) and how it changes Pimcore workflows for agentic PXM

You have probably heard people throw around “MCP” like it is a magic spell. Sometimes it is hype. Sometimes it is infrastructure.

Anthropic introduced the Model Context Protocol (MCP) as an open standard for connecting AI assistants to the systems where data lives, enabling secure two-way connections via MCP servers and MCP clients. https://www.anthropic.com/news/model-context-protocol

Pimcore’s Simple REST Datahub provides an MCP server endpoint that enables AI agents and LLMs to directly access and query Pimcore data. Pimcore explicitly marks this MCP feature as experimental. https://docs.pimcore.com/platform/Datahub_Simple_Rest/MCP_Server

Why do I care?

Because once an agent can query product objects, completeness status, and import outcomes, you can finally stop treating your product team like a human router.

Example prompts that become realistic:

- “Show me products missing GTIN in the DE channel.”

- “List suppliers with recurring unit errors.”

- “Draft a supplier email asking only for the fields that block publication.”
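
For a sense of what sits underneath a prompt like the first one: MCP messages are JSON-RPC 2.0, and a client invokes a server tool with the `tools/call` method. The sketch below only builds such a request. The tool name `search_products` and its arguments are hypothetical; a real server advertises its actual tools through `tools/list`, so check what the Pimcore endpoint exposes before relying on any name.

```python
import json
from itertools import count

# Monotonic request ids, as JSON-RPC expects each request to be identifiable.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool and argument names, for illustration only.
payload = build_tool_call("search_products", {"missing_field": "gtin", "channel": "DE"})
```

In practice an MCP client library handles this plumbing for you. The point is that the agent's question ends up as a structured, auditable request rather than a screenshot in a chat thread.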

Rhetorical question time again: if your team spends more time locating problems than fixing them, what are you actually paying for?

What would a 48-hour supplier onboarding sprint into Pimcore look like?

Let’s get concrete.

If you want to onboard supplier data into Pimcore in 48 hours, you need a sprint structure. Not a “we will see how it goes” structure.

Here is the playbook we will demonstrate.

Day 1, morning: define the target schema

- pick the minimal set of attributes needed to publish

- define required vs optional fields

- define normalization rules for units, locales, and formats
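
A target schema does not need to be fancy to be useful. Here is a minimal sketch, assuming a handful of hypothetical fields; `FieldSpec`, `TARGET_SCHEMA`, and the locale convention (`de_DE`) are illustrative choices, not a Pimcore format.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class FieldSpec:
    name: str
    required: bool = False
    normalize: Optional[Callable[[str], str]] = None  # rule applied to raw values


def normalize_locale(value: str) -> str:
    """Turn 'de-de' or 'DE_de' into 'de_DE'; leave bare languages like 'en' lower-cased."""
    language, _, region = value.strip().replace("-", "_").partition("_")
    return f"{language.lower()}_{region.upper()}" if region else language.lower()


# Hypothetical minimal schema: only what is needed to publish.
TARGET_SCHEMA = [
    FieldSpec("sku", required=True, normalize=str.strip),
    FieldSpec("name", required=True, normalize=str.strip),
    FieldSpec("locale", required=True, normalize=normalize_locale),
    FieldSpec("description"),
]


def required_fields(schema: list[FieldSpec]) -> set[str]:
    """Names of the fields that block publication when missing."""
    return {spec.name for spec in schema if spec.required}
```

Keeping required flags and normalization rules next to each field means the Day 2 validation step has a single source of truth to read from.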

Day 1, afternoon: run a reality check on the supplier file

- detect structural issues like merged headers, multi-row tables, hidden sheets

- identify quality issues like missing identifiers, inconsistent units, invalid categories
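
Identifier checks are a good first quality gate because they are cheap and objective. The sketch below validates GTINs with the standard GS1 check-digit rule (weights 3 and 1 alternating from the right). The `gtin` key on each record is an assumed field name.

```python
def gtin_is_valid(value: str) -> bool:
    """Check length and GS1 check digit for GTIN-8, GTIN-12, GTIN-13, and GTIN-14."""
    digits = value.strip()
    if not (digits.isascii() and digits.isdigit()) or len(digits) not in (8, 12, 13, 14):
        return False
    body, check = digits[:-1], int(digits[-1])
    # Starting from the digit next to the check digit, weights alternate 3, 1, 3, ...
    total = sum(int(d) * (3 if i % 2 == 0 else 1) for i, d in enumerate(reversed(body)))
    return (10 - total % 10) % 10 == check


def identifier_issues(records: list[dict]) -> list[tuple[int, str]]:
    """Return (row index, reason) pairs for records with a missing or invalid GTIN."""
    issues = []
    for index, record in enumerate(records):
        gtin = str(record.get("gtin") or "").strip()
        if not gtin:
            issues.append((index, "missing GTIN"))
        elif not gtin_is_valid(gtin):
            issues.append((index, "invalid GTIN check digit or length"))
    return issues


records = [{"gtin": "4006381333931"}, {"gtin": ""}, {"gtin": "4006381333932"}]
issues = identifier_issues(records)
```

The output is exactly the list you want to send back to the supplier: which rows, and why.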

Day 1, late afternoon: generate a mapping draft

- map supplier columns to your Pimcore object model

- flag ambiguous fields with confidence scores
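
A mapping draft with confidence scores can start very simply. This sketch uses plain string similarity from Python's standard library as a stand-in for whatever matching logic you actually run (an AI model, synonym tables, supplier history). The target field names and the 0.8 review threshold are assumptions.

```python
from difflib import SequenceMatcher

# Hypothetical target attributes in the Pimcore object model.
TARGET_FIELDS = ["sku", "name", "gtin", "net_weight"]


def similarity(a: str, b: str) -> float:
    """Case-insensitive similarity between two labels, from 0.0 to 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def draft_mapping(supplier_columns: list[str], target_fields: list[str],
                  review_below: float = 0.8) -> list[dict]:
    """Propose the closest target field per supplier column and flag weak matches."""
    draft = []
    for column in supplier_columns:
        best = max(target_fields, key=lambda field: similarity(column, field))
        score = round(similarity(column, best), 2)
        draft.append({
            "source": column,
            "target": best,
            "confidence": score,
            "needs_review": score < review_below,
        })
    return draft


draft = draft_mapping(["SKU", "Article No"], TARGET_FIELDS)
```

Anything flagged `needs_review` goes to a human. The goal of the draft is to shrink the review queue, not to remove the reviewer.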

Day 2, morning: run transformation and validation

- normalize units and formats

- validate required fields

- attach assets where possible
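
Here is a minimal sketch of the first two steps, under stated assumptions: weights are stored in grams, a comma in a number is a decimal separator (so "1,500 g" would be misread), and the field names are hypothetical.

```python
import re
from decimal import Decimal

# Conversion factors to grams. Storing weights in grams is an assumed convention.
TO_GRAMS = {
    "mg": Decimal("0.001"),
    "g": Decimal("1"),
    "kg": Decimal("1000"),
    "lb": Decimal("453.59237"),
}

_WEIGHT = re.compile(r"\s*([0-9]+(?:[.,][0-9]+)?)\s*([a-zA-Z]+)\s*")


def normalize_weight(raw: str) -> Decimal:
    """Parse values like '0,5 kg' or '500g' and return the weight in grams."""
    match = _WEIGHT.fullmatch(raw)
    if match is None:
        raise ValueError(f"cannot parse weight: {raw!r}")
    # Assumption: a comma is a decimal separator, not a thousands separator.
    number = match.group(1).replace(",", ".")
    unit = match.group(2).lower()
    if unit not in TO_GRAMS:
        raise ValueError(f"unknown unit: {unit!r}")
    return Decimal(number) * TO_GRAMS[unit]


def missing_fields(record: dict, required: list[str]) -> list[str]:
    """List required fields that are absent or blank in a record."""
    return [field for field in required if not str(record.get(field) or "").strip()]


grams = normalize_weight("0,5 kg")
blocked_by = missing_fields({"sku": "A-1", "name": " "}, ["sku", "name", "gtin"])
```

Raising on unknown units is intentional. A loud failure on Day 2 is cheaper than a silent wrong weight in a published channel.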

Day 2, afternoon: push into Pimcore and verify

- create or update objects

- log every transformation

- spot-check a sample and sign off
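
Before anything is written, it helps to plan the push and pick the spot-check sample deterministically. The sketch below works on plain dictionaries keyed by a hypothetical `sku` field and deliberately makes no Pimcore API calls; how you actually create or update objects depends on your import setup.

```python
import random


def plan_upserts(existing: dict[str, dict], incoming: list[dict],
                 key: str = "sku") -> dict[str, list[str]]:
    """Classify incoming records as create, update, or unchanged by comparing
    them with the records already known under the same key."""
    plan: dict[str, list[str]] = {"create": [], "update": [], "unchanged": []}
    for record in incoming:
        current = existing.get(record[key])
        if current is None:
            plan["create"].append(record[key])
        elif current != record:
            plan["update"].append(record[key])
        else:
            plan["unchanged"].append(record[key])
    return plan


def spot_check_sample(keys: list[str], size: int, seed: int = 0) -> list[str]:
    """Pick a reproducible random sample of keys for manual review."""
    rng = random.Random(seed)
    return rng.sample(keys, min(size, len(keys)))


existing = {"A": {"sku": "A", "name": "Hammer"}}
incoming = [{"sku": "A", "name": "Hammer XL"}, {"sku": "B", "name": "Saw"}]
plan = plan_upserts(existing, incoming)
sample = spot_check_sample(plan["create"] + plan["update"], size=2)
```

A fixed seed means two reviewers looking at the same import check the same products, which makes the sign-off reproducible.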

If you are thinking “that sounds like a lot,” you are right. But it is still less work than a month of email tennis. No kidding.

Up to 95 percent time reduction in product onboarding without playing games with the numbers

Let’s talk about the number everyone wants.

OpenProd is an AI Product Data Middleware built specifically for the onboarding layer.

In projects we have tracked, teams have seen up to 95% time reduction on repetitive onboarding work when the pipeline is configured and suppliers follow a predictable submission pattern. That qualifier matters.

If your supplier sends you a new file layout every week, no system can save you. If you standardize the flow and automate the boring parts, the manual effort collapses fast.

And yeah, once it clicks, it feels almost unfair.

Why supplier onboarding portals still miss the pipeline problem

I am going to be a bit annoying here.

A lot of platforms solve onboarding by building a supplier portal. That helps, but it is not the whole story.

Take inriver’s Supplier Onboarding site: suppliers log in to upload data files (like .xls, .xlsx, or .csv) and media, with validation and optional automatic import after validation. https://community.inriver.com/hc/en-us/articles/360012180234-Inriver-Supplier-Onboarding-site-for-suppliers

That is good.

But here is the catch: portals are still user interfaces.

Your bottleneck is often not the UI. It is the transformations, schema drift, downstream workflows, and the fact that you still need a clean contract into Pimcore.

So the practical question is: do you want another portal, or do you want a middleware layer that normalizes everything into Pimcore with predictable contracts?

What should you measure to stop onboarding from being a black hole?

If you do not measure onboarding, you will keep guessing. Simple as that.

Here are the metrics I track when I want onboarding to stop being a mysterious cost center:

- time to first valid import

- percentage of products passing validation on first attempt

- number of supplier back-and-forth cycles per catalog

- cost per SKU onboarded

- time from “file received” to “published in channel”
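
These metrics are simple arithmetic once you record a few timestamps and counts per onboarding run. A minimal sketch, assuming you log the fields below; `OnboardingRun` and its attribute names are hypothetical, and the figures in the example are made up for illustration.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class OnboardingRun:
    file_received: datetime
    first_valid_import: datetime
    published: datetime
    products_total: int
    products_valid_first_attempt: int
    supplier_round_trips: int
    total_cost: float  # in whatever currency you report in


def summarize(run: OnboardingRun) -> dict[str, float]:
    """Compute the five onboarding metrics for a single run."""
    if run.products_total <= 0:
        raise ValueError("products_total must be positive")

    def hours(start: datetime, end: datetime) -> float:
        return (end - start).total_seconds() / 3600

    return {
        "hours_to_first_valid_import": hours(run.file_received, run.first_valid_import),
        "first_pass_rate": run.products_valid_first_attempt / run.products_total,
        "round_trips": float(run.supplier_round_trips),
        "cost_per_sku": run.total_cost / run.products_total,
        "hours_to_published": hours(run.file_received, run.published),
    }


# Illustrative numbers only.
example = OnboardingRun(
    file_received=datetime(2026, 3, 2, 9, 0),
    first_valid_import=datetime(2026, 3, 2, 15, 0),
    published=datetime(2026, 3, 4, 9, 0),
    products_total=200,
    products_valid_first_attempt=150,
    supplier_round_trips=2,
    total_cost=400.0,
)
metrics = summarize(example)
```

Track these per supplier and per catalog, and the trend line does the arguing for you.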

This is the stuff that makes a CFO stop rolling their eyes.

And honestly, it also keeps your team sane, because you can finally point to a system instead of telling war stories.

How to start fixing supplier onboarding if you are stuck in spreadsheets

Do not try to boil the ocean.

Pick one supplier, one category, one channel.

Run a two-day onboarding sprint. Document every failure mode. Then build your pipeline around reality, not wishful thinking.

That single sprint teaches you more than three months of steering committee calls. No joke.

And if you want a head start, it is worth reviewing how Pimcore Data Importer supports mappings, previews, cron-based runs, and logging, because those primitives are what you want to leverage once your input is stable. https://docs.pimcore.com/platform/2024.2/Data_Importer/

See the live demo at Pimcore Inspire 2026

If you are heading to Salzburg for Pimcore Inspire, come to the OpenProd masterclass “From Excel Hell to AI Onboarding”.

We will walk through a real onboarding sprint, show how the AI Product Data Middleware layer fits into Pimcore, and share the exact checks, mapping steps, and validation logic.

Reserve your spot: https://openprod.io/inspire

Internal reading if you want more context before the event:

- https://openprod.io/blog/pimcore-studio-openprod-mcp-agent-ready-pim-stack/

- https://openprod.io/pim-roi-calculator/

- https://openprod.io/solutions/

Sources and Further Reading

- https://openprod.io/inspire

- https://docs.pimcore.com/platform/2024.2/Data_Importer/

- https://docs.pimcore.com/platform/Datahub_Simple_Rest/MCP_Server

- https://www.anthropic.com/news/model-context-protocol

- https://flatfile.com/blog/10-data-onboarding-problems-cs-leaders-face-and-how-to-fix-them/

- https://community.inriver.com/hc/en-us/articles/360012180234-Inriver-Supplier-Onboarding-site-for-suppliers