On April 14, we walked into Salzburg with one question: does the “missing layer” story we have been telling for six months resonate when we put it in front of the room that matters most? By the end of the day, we had an answer: a full masterclass room, a queue of booth conversations that ran past the coffee break, and enough follow-up meetings on the calendar to keep the team busy well into May. Here is what we heard, and what it says about where product data onboarding is heading in 2026.

The thesis we brought to Salzburg

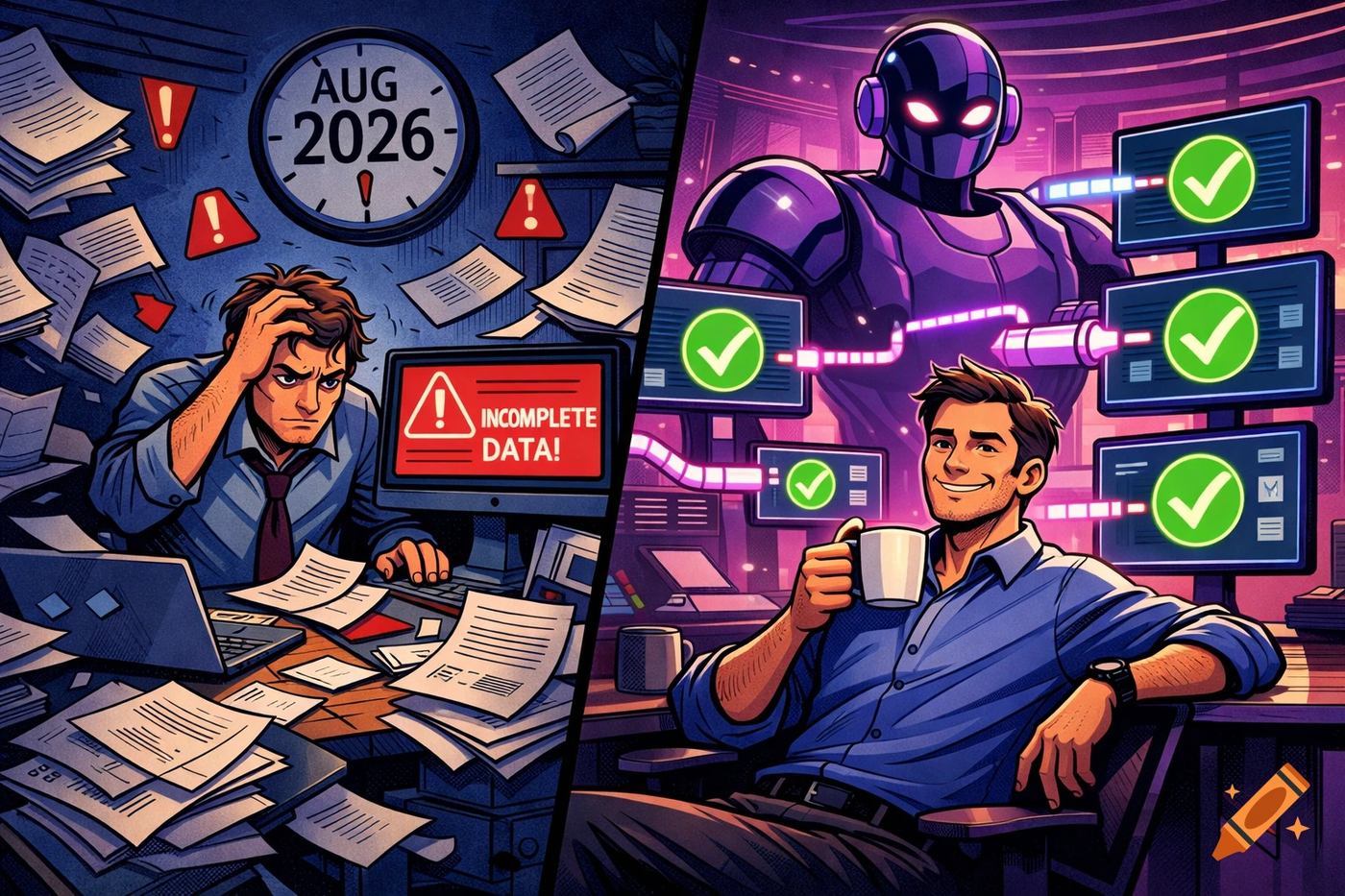

The problem we keep running into, across seventy-plus Pimcore implementations, is not the PIM. The PIM works. What is broken is everything that happens before the data reaches the PIM: supplier PDFs, half-structured Excel files, XML feeds with thirty variants of the same attribute name. Clean that up by hand and you spend three to six months turning raw supplier files into something a PIM can actually ingest, with a team of product data specialists burning through weeks of manual mapping, normalization, and quality checks for every new supplier or category.

We brought a straightforward proposition to Inspire: there is a layer missing between the supplier and the PIM, and it needs to be AI-native, PIM-agnostic, and built with confidence scores so humans stay in control. That is openProd.

The masterclass was officially listed in the Pimcore program as “From Product Data Mess to PIM-Ready in 2 Days.” We had forty-five minutes. We used them to walk through the actual pipeline: PDF ingest, AI attribute extraction, classification mapping, confidence scoring, human review, and push to the target PIM. No fluff, no slideware, no stock “AI transforms everything” talking points.

A full room is a data point, not a victory lap

The room filled up before we started and stayed filled. Zero empty seats. We opened with one number: a fifteen-page supplier PDF parsed into 128 structured attributes for roughly two euros of compute. The room leaned in. That is the moment you can feel whether a story lands or not, and this one landed.

We do not treat a full room as a win in itself. At an industry event, a full room usually just means the topic is on people’s minds. What mattered was what happened in the thirty minutes after the masterclass: attendees came to the booth asking not only about openProd, but also about LemonMind’s advisory capacity for finishing existing Pimcore implementations that had stalled on data quality. That is a signal. Product data onboarding is not a niche pain. It is the rate-limiting step for a meaningful share of PIM rollouts in Europe right now.

A working estimate from our implementation data: onboarding a mid-market catalog of 1,000 SKUs from messy supplier files costs roughly fourteen thousand euros in loaded human labor when done manually, or about fourteen euros per SKU. Scale that across suppliers, seasons, and categories, and you get the real number that is blocking PIM time-to-value. When we put that figure on the screen, the people nodding were not vendors. They were the people who sign off on those timesheets.

The validation we did not plan for

The conversations we did not expect to have in Salzburg turned out to be the most important ones. Buyers from different industries came to the booth describing exactly the same problem: a global manufacturer migrating more than ten ERP systems across regions, blocked on supplier data onboarding across categories; a lean retail operation with a very large Pimcore catalog and a steady stream of seasonal supplier data to process; and several mid-market manufacturers whose engineering teams had come specifically to evaluate how to move onboarding work off human specialists and into an AI layer with human review.

These are not demo tire-kickers. These are buyers describing a problem they have already budgeted against.

Two patterns stood out. First, the onboarding problem is not a long-tail complaint from a handful of verticals. It shows up across manufacturing, distribution, B2B commerce, and retail, in Pimcore, Akeneo, and Ergonode deployments alike. Second, when buyers see a working pipeline on real supplier files, the questions change in a specific way. They stop asking “does this work” and start asking “how does this handle my fifth edge case.” That shift is the clearest signal we had that the category is ready.

What the numbers did in the room

A few data points did heavier lifting than the rest. In rough order of impact:

The roughly 2-euro extraction cost on a fifteen-page supplier PDF. This one always gets the room. When you tell a PIM Manager that the compute cost to extract 128 structured attributes from a supplier document is less than the price of lunch, the math of “how many files could we process if we stopped fighting the backlog” starts to feel tractable.
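For readers who want the unit economics behind that claim, here is a back-of-the-envelope calculation that uses only the figures above: roughly 2 euros of compute, 15 pages, 128 attributes. It is plain arithmetic, not a pricing model, and real costs will vary with document length and layout.

```python
# Figures quoted in the text: ~2 EUR of compute for one 15-page supplier PDF
# that yielded 128 structured attributes.
EXTRACTION_COST_EUR = 2.0
PAGES = 15
ATTRIBUTES = 128

cost_per_page = EXTRACTION_COST_EUR / PAGES            # ~0.13 EUR per page
cost_per_attribute = EXTRACTION_COST_EUR / ATTRIBUTES  # under 2 cents per attribute

print(f"per page:      {cost_per_page:.3f} EUR")
print(f"per attribute: {cost_per_attribute:.4f} EUR")
```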

The time reduction of up to 95% on the manual mapping step. For a room full of people who have personally sat through sprint after sprint of supplier spreadsheet cleanup, that number is not aspirational; it is a promise they can pressure-test. And they did, right after the demo, with their own edge cases.

The confidence scores on every field. This is the number that buyers at data-sensitive companies care about most. AI is not making decisions on your production catalog. AI is proposing, surfacing confidence, and handing the final call to a human reviewer. For regulated categories like building materials, electrical, or automotive parts, that workflow is non-negotiable.
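The propose, score, and review workflow can be sketched in a few lines. This is a minimal illustration under stated assumptions, not openProd’s implementation: the `ProposedAttribute` record, the `build_review_queue` helper, the 0.9 threshold, and the sample values are all hypothetical. Every proposed value lands in the human review queue; the confidence score only decides which fields get flagged and the order in which the reviewer sees them.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class ProposedAttribute:
    """Hypothetical record for one AI-proposed value (not openProd's real schema)."""
    name: str
    value: str
    confidence: float  # assumed to lie in [0.0, 1.0]


# Illustrative cut-off only; in practice it would be tuned per category.
REVIEW_THRESHOLD = 0.9


def build_review_queue(
    proposals: List[ProposedAttribute], threshold: float = REVIEW_THRESHOLD
) -> List[Tuple[ProposedAttribute, bool]]:
    """Return every proposal for human review, lowest confidence first.

    The bool marks fields below the threshold. Nothing is auto-committed:
    the reviewer still makes the final call on each field.
    """
    ordered = sorted(proposals, key=lambda p: p.confidence)
    return [(p, p.confidence < threshold) for p in ordered]


# Illustrative sample values, not real extraction output.
proposals = [
    ProposedAttribute("voltage", "230 V", 0.97),
    ProposedAttribute("fire_rating", "A2-s1,d0", 0.62),
    ProposedAttribute("width_mm", "600", 0.88),
]
queue = build_review_queue(proposals)
for attr, flagged in queue:
    marker = "FLAG" if flagged else "ok"
    print(f"{marker:4} {attr.name}={attr.value} ({attr.confidence:.2f})")
```

Sorting ascending puts reviewer attention where the model is least sure, which matters most in regulated categories where a single wrong value can block a listing.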

The PIM-agnostic architecture. Buyers we spoke with are not choosing between onboarding vendors. They are choosing between “stay locked into my current PIM’s feature roadmap” and “add an AI layer that gives me flexibility across the PIM I already run.” The second path is where budget is starting to move.
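In code terms, PIM-agnostic usually means the pipeline depends on a narrow target interface rather than on one PIM’s API. The sketch below assumes a hypothetical `PimTarget` protocol with a single `push` method and uses an in-memory stand-in; a real adapter for Pimcore, Akeneo, or Ergonode would wrap that system’s own import API, which is not shown here.

```python
from typing import Dict, Protocol


class PimTarget(Protocol):
    """Hypothetical target interface; openProd's actual connector API is not described here."""

    def push(self, sku: str, attributes: Dict[str, str]) -> None: ...


class InMemoryPim:
    """Illustrative stand-in target; a real adapter would call a PIM's own import API."""

    def __init__(self) -> None:
        self.records: Dict[str, Dict[str, str]] = {}

    def push(self, sku: str, attributes: Dict[str, str]) -> None:
        # Merge into any existing record for this SKU.
        self.records.setdefault(sku, {}).update(attributes)


def publish(approved: Dict[str, Dict[str, str]], target: PimTarget) -> int:
    """Send reviewer-approved records to any object that implements PimTarget."""
    for sku, attributes in approved.items():
        target.push(sku, attributes)
    return len(approved)


pim = InMemoryPim()
count = publish({"SKU-001": {"voltage": "230 V"}}, pim)
print(count, pim.records)
```

Supporting another PIM then means writing one more class with a matching `push` method; the publishing step itself does not change.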

What we heard from developers

Inspire also gave us a closer look at where the developer conversation is going. Technical attendees kept circling back to the same questions: how do we plug openProd into agent workflows, how do we call it from the tools our teams already use, and how do we keep the onboarding pipeline composable instead of locked behind a single vendor’s UI.

The pattern in those conversations was consistent. Developer buyers do not want another closed system. They want an onboarding layer that behaves like infrastructure: callable, scriptable, and interoperable with whatever agent stack their organization ends up standardizing on. That shaped more than a few items on our roadmap for the weeks ahead.

What we are doing with the signal

We left Salzburg with more ideas than we can ship in a quarter, and a calendar full of follow-up meetings with the people we met at the booth and after the masterclass. The next several weeks are going to be loud. Scoping calls on real supplier files. Deeper conversations with buyers whose migrations are actively blocked on data quality. Technical working sessions with teams that want to see how openProd fits their specific stack.

What that means for the product: the roadmap backlog got longer, not shorter, and the prioritization conversation just became more interesting. Every buyer conversation at the event ended with some version of “how do we stay in control.” The confidence score workflow answers that, and it is going to get dedicated investment in the coming release cycles. Beyond that, we are letting the post-event pipeline tell us where the sharpest pain sits before we commit publicly to what ships next.

What this means for you

If you are running a PIM implementation right now and supplier data onboarding is your rate-limiting step, you are not alone. It is the most common reason Pimcore rollouts slip, and it is the most common reason product teams at mid-market and enterprise companies miss their launch windows. The honest answer most agencies give clients is “plan for three to six months.” That is no longer the only answer.

The less obvious piece, and the piece that Inspire confirmed for us, is that this is not a tooling preference anymore. It is a margin question. If your team is still burning specialist hours on manual supplier file cleanup while your competitors are moving that work into an AI layer with human review, you are not just slower. You are more expensive per SKU onboarded, per category launched, per region expanded. That gap compounds.

The layer is here. It is PIM-agnostic. And based on what a full room told us in Salzburg, it is going to be one of the most discussed architecture changes in product data for the rest of 2026.

If you want to see it run on your own supplier files, book a demo. We will walk through one of your real files end-to-end, score every extracted attribute, and push the result to whichever PIM you are running today. Forty-five minutes, your data, no slideware.