Forrester published its Data Quality Solutions Wave in Q1 2026 and the conclusion was blunt: static validation is dead. Gartner’s 2026 Magic Quadrant for Augmented Data Quality Solutions went further - renaming “AI/ML Development” to “Analytics and AI readiness” and making observability a mandatory evaluation criterion.

Both reports point to the same problem: most organizations still measure data quality like it’s 2019. Fill rates. Completeness percentages. Green dashboards.

And then an AI agent tries to use that data and chokes.

I’ve seen this in 70+ PIM implementations. The dashboard says 95% complete. The product data team celebrates. Two weeks later, someone connects an MCP server or plugs the catalog into an agentic commerce workflow, and nothing works. Not because data is missing - but because the data that’s there is wrong in ways completeness metrics don’t capture.

Look, the PIM industry needs a better measurement framework. One that answers the question that actually matters in 2026: can a machine consume this data and act on it?

Why data completeness is not a data quality metric

Completeness is the most popular metric in PIM because it’s the easiest to calculate. Count the filled fields, divide by total fields, show a percentage. It feels scientific. It looks great in quarterly reviews.

It’s also almost meaningless.

Here’s a real example from a client engagement last year. A furniture distributor had 97% field completeness across 12,000 SKUs. Their PIM dashboard was solid green. But when we connected their catalog to an AI-powered product matcher for marketplace syndication, the match rate was under 40%.

Why? Because:

- The “material” field contained free-text entries like “wood, various types” instead of standardized taxonomy values

- Dimensions were stored as strings (“approx. 120x80”) rather than structured numeric fields with units

- Color values mixed naming conventions - some products used “Midnight Blue,” others used “#191970,” others just said “dark”

- Product descriptions were copy-pasted from supplier catalogs with inconsistent formatting and language

Every field was technically filled. Every field was operationally useless for machine consumption.

Akeneo’s SDM team seems to get this partially right. Their March 2026 AI mapping update introduced confidence scoring, surfacing only suggestions with a confidence of 70% or above. That’s a step forward because it acknowledges that data quality is probabilistic, not binary. But confidence on the mapping step doesn’t help if the underlying data itself is semantically broken.

So what should you actually be measuring?

Five product data metrics that predict AI agent success

After running onboarding projects where AI agents are the primary data consumer - not humans browsing a webshop - we’ve landed on five metrics that actually correlate with downstream success. None of them appear on a standard PIM dashboard.

1. Semantic accuracy rate

This measures whether field values mean what they’re supposed to mean, not just whether they exist. A color field containing “see image” has zero semantic accuracy. A material field containing “other” has near-zero semantic accuracy. You calculate this by sampling SKUs and validating whether the value in each field would allow a machine to make a correct classification or comparison decision.

Target: above 90% semantic accuracy before connecting any agentic workflow.
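
To make the sampling calculation concrete, here is a minimal Python sketch. It assumes each sampled SKU is a dict of attribute values and approximates “a machine could act on this value” with simple per-field rules. The field names, vocabularies, and rules are hypothetical placeholders; in practice they would come from your own taxonomy.

```python
import re

# Hypothetical rules: a value passes only if a program can interpret it
# without human judgment. Real vocabularies would come from your taxonomy.
HEX_COLOR = re.compile(r"#[0-9A-Fa-f]{6}")
KNOWN_COLORS = {"midnight blue", "black", "white", "grey"}
KNOWN_MATERIALS = {"oak", "beech", "stainless steel", "polypropylene"}


def color_ok(value: str) -> bool:
    v = value.strip()
    return HEX_COLOR.fullmatch(v) is not None or v.lower() in KNOWN_COLORS


def material_ok(value: str) -> bool:
    return value.strip().lower() in KNOWN_MATERIALS


RULES = {"color": color_ok, "material": material_ok}


def semantic_accuracy(sample: list[dict]) -> float:
    """Share of filled, rule-checked values in the sample that pass their rule."""
    checked = passed = 0
    for sku in sample:
        for field, rule in RULES.items():
            value = sku.get(field)
            if value is None:
                continue  # empty fields are a completeness issue, not scored here
            checked += 1
            if rule(value):
                passed += 1
    return passed / checked if checked else 0.0


sample = [
    {"color": "Midnight Blue", "material": "Oak"},
    {"color": "#191970", "material": "wood, various types"},
    {"color": "see image", "material": "other"},
]
print(f"{semantic_accuracy(sample):.0%}")  # 50%
```

Values like “see image” and “wood, various types” fail, so this sample scores 50% even though every field is filled. Empty fields are skipped on purpose: they already show up in completeness.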

2. Taxonomy adherence score

How many attribute values match your controlled vocabulary vs. free-text entries? Free text kills machine processing. If your PIM allows users to type “Stainless Steel,” “stainless steel,” “SS,” and “Inox” in the same material field, you have a taxonomy adherence problem. An AI agent processing a purchase decision needs one canonical value - not four variations of the same thing.

Target: above 95% taxonomy adherence across core product attributes.
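
A minimal sketch of the score, assuming you keep a controlled vocabulary per attribute (the `CANONICAL_MATERIALS` set below is hypothetical). A value counts as adherent only on an exact match, so case variants like “stainless steel” count against you, which is the point: an agent needs one canonical value.

```python
from collections import Counter

# Hypothetical controlled vocabulary for the "material" attribute.
CANONICAL_MATERIALS = {"Stainless Steel", "Oak", "Beech", "Polypropylene"}


def taxonomy_adherence(values: list[str], vocabulary: set[str]) -> float:
    """Share of values that exactly match a canonical vocabulary entry."""
    if not values:
        return 0.0
    matches = sum(1 for v in values if v in vocabulary)
    return matches / len(values)


def off_vocabulary(values: list[str], vocabulary: set[str]) -> list[tuple[str, int]]:
    """Free-text values ranked by frequency: the remediation worklist."""
    return Counter(v for v in values if v not in vocabulary).most_common()


materials = ["Stainless Steel", "stainless steel", "SS", "Inox", "Oak", "Oak"]
print(f"{taxonomy_adherence(materials, CANONICAL_MATERIALS):.0%}")  # 50%
print(off_vocabulary(materials, CANONICAL_MATERIALS))
```

The second function turns the gap into a worklist: the most frequent off-vocabulary strings are usually the cheapest ones to map.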

3. Structural consistency index

Are similar products described in the same structural format? If one chair has dimensions as “W80 x D60 x H90 cm” and another has “800mm width, 600mm depth, height 900mm,” a human can parse both. A machine matching these for comparison shopping will fail or produce garbage.

This metric measures the share of products within a category whose fields follow the same formatting pattern.

Target: above 85% structural consistency within each product category.
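
One way to turn this into a percentage, sketched under our own assumptions: reduce each value to a rough “shape” (number runs become `9`, letter runs become `a`), then measure the share of products in each category that use the category’s most common shape. The shape heuristic and the `category` key are illustrative, not a standard definition.

```python
import re
from collections import Counter, defaultdict


def format_signature(value: str) -> str:
    """Reduce a value to its shape: numbers -> '9', letter runs -> 'a'."""
    shape = re.sub(r"\d+(?:[.,]\d+)?", "9", value.strip())
    return re.sub(r"[A-Za-z]+", "a", shape)


def structural_consistency(products: list[dict], attribute: str) -> dict[str, float]:
    """Per category: share of products using the category's dominant format."""
    by_category = defaultdict(list)
    for p in products:
        if attribute in p:
            by_category[p["category"]].append(format_signature(p[attribute]))
    return {
        category: Counter(sigs).most_common(1)[0][1] / len(sigs)
        for category, sigs in by_category.items()
    }


products = [
    {"category": "chairs", "dimensions": "W80 x D60 x H90 cm"},
    {"category": "chairs", "dimensions": "W45 x D50 x H100 cm"},
    {"category": "chairs", "dimensions": "800mm width, 600mm depth, height 900mm"},
    {"category": "tables", "dimensions": "W120 x D80 x H75 cm"},
]
print(structural_consistency(products, "dimensions"))
```

The two chair formats from the example above produce different shapes, so chairs score about 67% while tables score 100%.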

4. Data freshness score

When was each product record last validated - not last modified, but last confirmed as accurate? A record modified three years ago might still be correct. But you don’t know. And neither does an AI agent building a procurement recommendation.

The Gartner 2026 MQ explicitly calls out monitoring production AI pipelines as a key evaluation criterion. That means continuous validation, not annual data audits.

Target: no product record goes more than 90 days without revalidation.
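
A sketch of the freshness check, assuming each record carries a hypothetical `last_validated` date that is tracked separately from `last_modified`. The check deliberately ignores `last_modified`: a record edited yesterday but never validated still counts as stale.

```python
from datetime import date, timedelta

MAX_AGE = timedelta(days=90)


def stale_records(records: list[dict], today: date) -> list[str]:
    """SKUs never validated, or last validated more than MAX_AGE ago."""
    return [
        r["sku"]
        for r in records
        if r.get("last_validated") is None or today - r["last_validated"] > MAX_AGE
    ]


def freshness_score(records: list[dict], today: date) -> float:
    """Share of records validated within the window."""
    if not records:
        return 0.0
    return 1 - len(stale_records(records, today)) / len(records)


records = [
    {"sku": "CH-001", "last_modified": date(2023, 3, 1), "last_validated": date(2026, 2, 20)},
    {"sku": "CH-002", "last_modified": date(2026, 3, 30), "last_validated": date(2025, 6, 1)},
    {"sku": "CH-003", "last_modified": date(2026, 1, 5), "last_validated": None},
]
today = date(2026, 4, 1)
print(stale_records(records, today))  # ['CH-002', 'CH-003']
print(f"{freshness_score(records, today):.0%}")  # 33%
```

Meeting the target means `stale_records` returns an empty list; the score is a trend line to report alongside it.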

5. Machine-readability ratio

What percentage of your product attributes are structured (typed fields with units, enumerations, or validated formats) vs. unstructured (free-text blobs)? The Forrester Wave Q1 2026 explicitly notes that “multimodal data support expands AI readiness” - platforms need to handle structured, semi-structured, and unstructured data. But your goal should be moving as much product data as possible into structured formats.

Target: above 80% structured fields for any catalog connected to AI workflows.
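
A minimal sketch, assuming you can export each attribute’s data type from your PIM. The type names in `STRUCTURED_TYPES` and the example schema are hypothetical; map them to whatever your PIM calls typed, enumerated, or pattern-validated fields.

```python
# Hypothetical type names; substitute your PIM's own attribute types.
STRUCTURED_TYPES = {"number_with_unit", "enum", "boolean", "validated_pattern"}

schema = {
    "width": "number_with_unit",
    "depth": "number_with_unit",
    "material": "enum",
    "color": "enum",
    "gtin": "validated_pattern",
    "care_instructions": "text",
    "marketing_description": "text",
}


def machine_readability_ratio(schema: dict[str, str]) -> float:
    """Share of attributes whose type is structured rather than free text."""
    if not schema:
        return 0.0
    structured = sum(1 for t in schema.values() if t in STRUCTURED_TYPES)
    return structured / len(schema)


print(f"{machine_readability_ratio(schema):.0%}")  # 71%
```

This example lands at about 71%, below the 80% target. Counting attributes is the simplest version; weighting attributes by business importance would be a reasonable refinement.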

| Metric | What it measures | Tracked by standard PIM? | Target |
|---|---|---|---|
| Semantic accuracy | Values mean what they should | No | Above 90% |
| Taxonomy adherence | Controlled vocabulary vs. free text | Partially | Above 95% |
| Structural consistency | Same format within categories | No | Above 85% |
| Data freshness | Last validation date | Rarely | 90-day max |
| Machine-readability | Structured vs. unstructured ratio | No | Above 80% |

Is your PIM dashboard showing any of these? Probably not. And the gap between what you measure and what AI agents need is where revenue quietly disappears.

We covered the operational side of supplier data validation in our supplier data quality audit guide - but that piece focused on pre-migration hygiene. These five metrics apply continuously, not just during onboarding.

How to audit product data for machine readability

The operational workflow for measuring these metrics is different from a standard data quality audit. Here’s the process we run with clients before connecting any AI-powered workflow to their PIM.

Step 1: Sample, don’t scan everything. Pull 200-500 SKUs across your top product categories. Auditing 50,000 products by hand isn’t just a waste of time; at that scale it isn’t even possible without automation.
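
A sketch of the sampling step, assuming products are dicts with a `category` key and that you have already filtered to your top categories. Capping each category (here at a hypothetical 100 SKUs) keeps one large category from crowding out the rest, and a fixed seed makes the sample reproducible between audit runs.

```python
import random
from collections import defaultdict


def stratified_sample(products: list[dict], per_category: int, seed: int = 42) -> list[dict]:
    """Draw up to `per_category` SKUs from each category, reproducibly."""
    rng = random.Random(seed)
    by_category = defaultdict(list)
    for p in products:
        by_category[p["category"]].append(p)
    sample = []
    for category in sorted(by_category):
        items = by_category[category]
        sample.extend(rng.sample(items, min(per_category, len(items))))
    return sample


catalog = [
    {"sku": f"SKU-{i}", "category": c}
    for i, c in enumerate(["chairs"] * 300 + ["tables"] * 120 + ["lamps"] * 40)
]
sample = stratified_sample(catalog, per_category=100)
print(len(sample))  # 240
```

With 300 chairs, 120 tables, and 40 lamps, this draws 240 SKUs, inside the 200-500 range.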

Step 2: Run semantic validation. For each sampled product, check whether the attribute values would allow a machine to make correct decisions. The test is simple: could you write a programmatic rule that correctly interprets this value? If the answer is “only with human judgment,” the field fails semantic accuracy.
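
One way to run that test is to literally write the rule. The sketch below assumes your canonical dimension format is “W80 x D60 x H90 cm” (the first format from the chair example earlier); the regex, unit table, and function name are our own illustrations. Anything the rule returns `None` for, like “approx. 120x80”, fails semantic accuracy.

```python
import re
from typing import Optional

# Hypothetical rule for one accepted format, e.g. "W80 x D60 x H90 cm".
DIMENSIONS = re.compile(
    r"W(?P<w>\d+(?:\.\d+)?)\s*x\s*"
    r"D(?P<d>\d+(?:\.\d+)?)\s*x\s*"
    r"H(?P<h>\d+(?:\.\d+)?)\s*(?P<unit>mm|cm|m)",
    re.IGNORECASE,
)
TO_MM = {"mm": 1, "cm": 10, "m": 1000}


def parse_dimensions(value: str) -> Optional[dict]:
    """Width/depth/height in mm, or None if the value needs human judgment."""
    m = DIMENSIONS.fullmatch(value.strip())
    if m is None:
        return None
    factor = TO_MM[m["unit"].lower()]
    return {k: float(m[k]) * factor for k in ("w", "d", "h")}


for raw in ["W80 x D60 x H90 cm", "approx. 120x80", "W800 x D600 x H900 mm"]:
    print(raw, "->", parse_dimensions(raw))
```

Normalizing to millimetres also means the first and third values compare as equal, which is exactly what a comparison agent needs.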

Step 3: Map taxonomy gaps. Export all unique values per attribute field. Count how many unique strings exist for what should be a controlled list. If your “material” field has 340 unique values across 5,000 products, you have a taxonomy problem. Most fields should have fewer than 50 canonical values.
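
A sketch of the export-and-count step, assuming products are dicts keyed by attribute name. It reports both the raw unique count and the count after trimming whitespace and lowercasing: the gap between the two is pure formatting noise, while what remains (e.g. “SS” vs. “Inox”) needs a human-curated synonym mapping. The 50-value limit is the rule of thumb from this step, not a universal constant.

```python
MAX_CANONICAL = 50  # rule of thumb from Step 3


def taxonomy_gaps(products: list[dict], attributes: list[str], limit: int = MAX_CANONICAL) -> dict:
    """Per attribute: raw unique count, count after light normalization, and a flag."""
    report = {}
    for attr in attributes:
        raw = {p[attr] for p in products if p.get(attr)}
        normalized = {" ".join(v.lower().split()) for v in raw}
        report[attr] = {
            "unique_raw": len(raw),
            "unique_normalized": len(normalized),
            "over_limit": len(raw) > limit,
        }
    return report


products = [
    {"material": "Stainless Steel"}, {"material": "stainless steel "},
    {"material": "SS"}, {"material": "Inox"}, {"material": "Oak"},
]
print(taxonomy_gaps(products, ["material"]))
```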

Step 4: Check structural patterns. Group products by category and compare field formatting. Flag any category where the same attribute uses more than two formatting patterns. Dimensions, weights, and technical specifications are the usual offenders.
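
A sketch of the pattern check, assuming products are dicts with a `category` key. It uses a rough value “shape” heuristic of our own (number runs become `9`, letter runs become `a`) and flags any category/attribute pair with more than two distinct shapes, per the threshold in this step.

```python
import re
from collections import defaultdict


def format_signature(value: str) -> str:
    """Shape of a value: number runs -> '9', letter runs -> 'a'."""
    shape = re.sub(r"\d+(?:[.,]\d+)?", "9", value.strip())
    return re.sub(r"[A-Za-z]+", "a", shape)


def flag_format_drift(products: list[dict], attributes: list[str], max_patterns: int = 2) -> dict:
    """Return {(category, attribute): shapes} where shapes exceed max_patterns."""
    patterns = defaultdict(set)
    for p in products:
        for attr in attributes:
            if p.get(attr):
                patterns[(p["category"], attr)].add(format_signature(p[attr]))
    return {key: sigs for key, sigs in patterns.items() if len(sigs) > max_patterns}


products = [
    {"category": "chairs", "weight": "7.5 kg"},
    {"category": "chairs", "weight": "7500g"},
    {"category": "chairs", "weight": "approx. 8 kilos"},
    {"category": "tables", "weight": "32 kg"},
    {"category": "tables", "weight": "28 kg"},
]
flags = flag_format_drift(products, ["weight"])
print(sorted(flags))  # [('chairs', 'weight')]
```

Here chairs use three weight formats and get flagged; tables use one and pass.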

Step 5: Score and prioritize. Calculate each of the five metrics at the category level. Focus remediation on categories that are closest to being connected to AI workflows - your highest-revenue SKUs or the products going through agentic commerce channels first.
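
A sketch of the scoring and prioritization step, assuming the five metrics have already been computed per category. Targets come from the table above and are treated as minimums; freshness is expressed as the share of records validated within 90 days. Using annual revenue as the priority key is one assumption among several (agentic channel exposure would be another), and the category data below is made up for illustration.

```python
# Targets from the five-metric table, treated as minimum scores.
TARGETS = {
    "semantic_accuracy": 0.90,
    "taxonomy_adherence": 0.95,
    "structural_consistency": 0.85,
    "freshness": 1.00,  # share of records validated within 90 days
    "machine_readability": 0.80,
}


def failing_metrics(scores: dict[str, float]) -> list[str]:
    return [m for m, target in TARGETS.items() if scores.get(m, 0.0) < target]


def prioritize(categories: list[dict]) -> list[tuple[str, list[str]]]:
    """Categories with at least one failing metric, highest revenue first."""
    flagged = [(c, failing_metrics(c["scores"])) for c in categories]
    flagged = [(c, f) for c, f in flagged if f]
    flagged.sort(key=lambda cf: cf[0]["annual_revenue"], reverse=True)
    return [(c["name"], f) for c, f in flagged]


categories = [
    {"name": "chairs", "annual_revenue": 4_200_000,
     "scores": {"semantic_accuracy": 0.82, "taxonomy_adherence": 0.97,
                "structural_consistency": 0.67, "freshness": 1.0,
                "machine_readability": 0.85}},
    {"name": "lamps", "annual_revenue": 900_000,
     "scores": {"semantic_accuracy": 0.93, "taxonomy_adherence": 0.91,
                "structural_consistency": 0.90, "freshness": 0.75,
                "machine_readability": 0.81}},
    {"name": "tables", "annual_revenue": 2_500_000,
     "scores": {"semantic_accuracy": 0.95, "taxonomy_adherence": 0.99,
                "structural_consistency": 0.92, "freshness": 1.0,
                "machine_readability": 0.88}},
]
for name, failing in prioritize(categories):
    print(name, failing)
```

Tables pass everything and drop off the list; chairs come first because they carry the most revenue.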

This entire audit should take 2-3 days for a catalog of 10,000 SKUs using semi-automated tooling. Compare that to the 6-8 weeks most organizations spend on traditional data quality projects that measure… completeness.

If you want the full pre-audit playbook, our supplier data audit guide walks through the operational steps in detail. The five-metric framework above is what you layer on top of that foundation.

What poor data quality actually costs in the agentic era

The cost model for data quality failures has changed. In 2020, bad product data meant a customer saw the wrong specification and maybe returned the product. Annoying. Manageable.

In 2026, bad product data means an AI agent skips your catalog entirely.

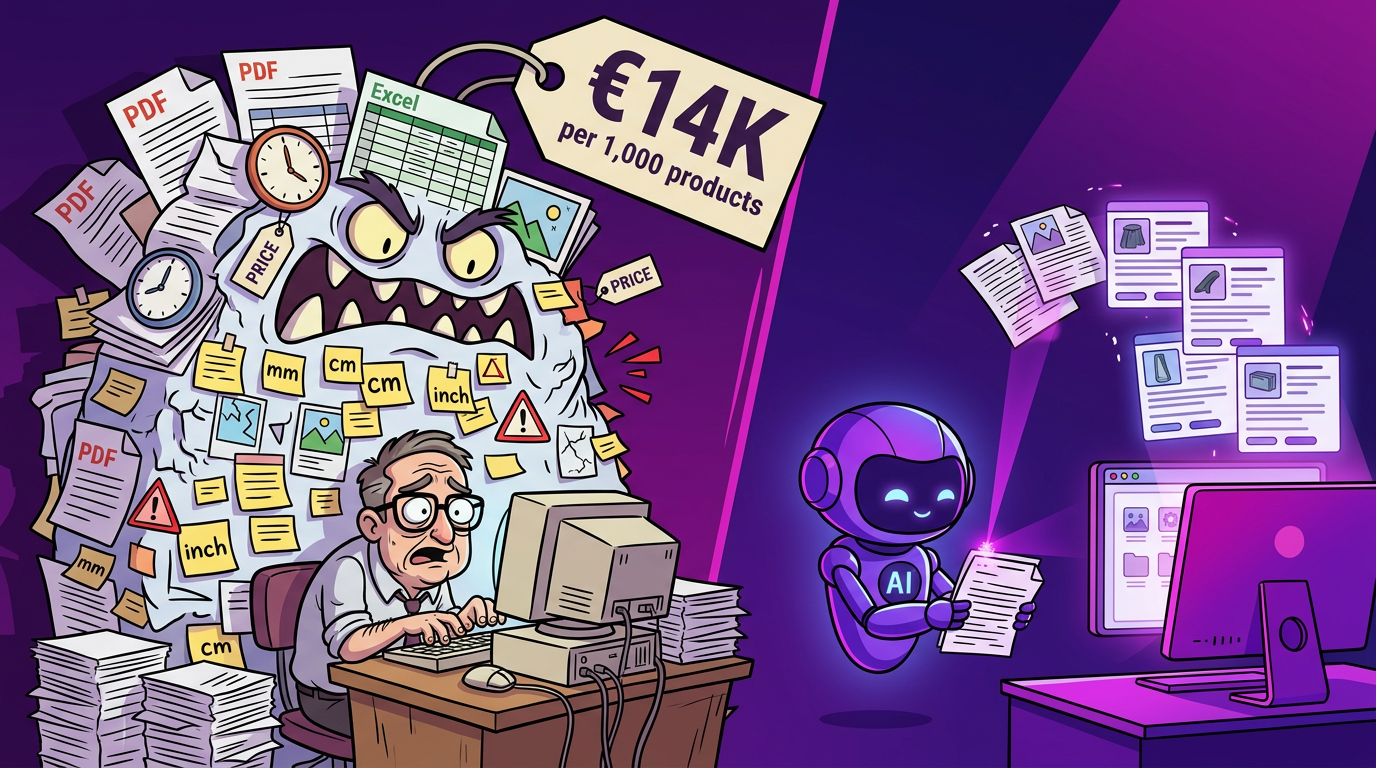

We’ve calculated across our implementation base that manual product data preparation costs approximately EUR 14,000 per 1,000 products. That number includes data collection, normalization, validation, and enrichment. But here’s the thing - that cost assumes you’re preparing data for human consumption.

Preparing data for machine consumption is actually cheaper per unit if you do it right, because AI-assisted tools can handle the normalization and structuring at scale. The Moxo data onboarding study reports a 70-80% reduction in processing time when using orchestrated workflows with AI validation. Flatfile reports similar numbers for their AI transformation agents.

But the cost of NOT preparing data is astronomical. If your products are invisible to AI agents because your data fails the five metrics above, you’re not just losing efficiency. You’re losing revenue that never shows up in your analytics because the transaction never happened.

Honestly, the CFO conversation has shifted. It used to be: “How much does data cleanup cost?” Now it’s: “How much revenue are we losing because AI agents can’t parse our catalog?”

The Forrester Wave report frames it as “data quality solutions now sit at the forefront of enterprise success in the AI adoption race.” That’s not a nice-to-have. That’s existential.

How to move from completeness dashboards to AI readiness scoring

The practical path from where most PIM teams are today (measuring completeness) to where they need to be (measuring AI readiness) involves three operational changes.

First, redefine your data quality scorecard. Replace or supplement your completeness percentage with the five metrics above. This doesn’t require new tools initially - you can calculate semantic accuracy and taxonomy adherence from a spreadsheet export. The point is changing what you measure, which changes what your team optimizes for.

Second, automate validation at ingest. The biggest operational win is catching data quality issues when supplier data enters your system, not during a quarterly audit. OpenProd.io does this by running AI-powered validation during the onboarding process - flagging semantic issues, inconsistent formats, and taxonomy violations before data reaches your PIM. The cost difference between fixing a field at ingest vs. fixing it after it’s propagated across 12 channels is roughly 10x.

Third, establish machine-readability as a gate. No product goes live in an agentic commerce channel until it passes a minimum threshold on all five metrics. This is the equivalent of what Akeneo’s SDM does with its 70% confidence gate on mapping - but applied to the data itself, not just the mapping step.
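
A sketch of such a gate, assuming product-level scores for the metrics are computed upstream. The thresholds mirror the targets in this article; the `ProductReadiness` type and `gate` function are hypothetical, not a feature of Akeneo, OpenProd.io, or any other PIM. Freshness is checked directly as days since last validation.

```python
from dataclasses import dataclass

# Hypothetical gate thresholds mirroring the article's targets.
GATE = {
    "semantic_accuracy": 0.90,
    "taxonomy_adherence": 0.95,
    "structural_consistency": 0.85,
    "machine_readability": 0.80,
}
MAX_DAYS_SINCE_VALIDATION = 90


@dataclass
class ProductReadiness:
    sku: str
    scores: dict[str, float]
    days_since_validation: int


def gate(p: ProductReadiness) -> tuple[bool, list[str]]:
    """Return (publishable, reasons). A product must pass every check."""
    reasons = [
        f"{m} {p.scores.get(m, 0.0):.0%} < {t:.0%}"
        for m, t in GATE.items()
        if p.scores.get(m, 0.0) < t
    ]
    if p.days_since_validation > MAX_DAYS_SINCE_VALIDATION:
        reasons.append(f"last validated {p.days_since_validation} days ago")
    return (not reasons, reasons)


ok = ProductReadiness("CH-001", {"semantic_accuracy": 0.96, "taxonomy_adherence": 1.0,
                                 "structural_consistency": 1.0, "machine_readability": 0.9}, 12)
weak = ProductReadiness("CH-002", {"semantic_accuracy": 0.96, "taxonomy_adherence": 0.8,
                                   "structural_consistency": 1.0, "machine_readability": 0.9}, 140)
print(gate(ok))
print(gate(weak))
```

Returning reasons rather than a bare boolean tells the data team exactly what to fix before the product can be published.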

You can calculate your current AI readiness score right now using the OpenProd.io PIM ROI Calculator and compare the cost of remediation against the revenue exposure from agentic channels.

The organizations that will win in 2026 are not the ones with the greenest PIM dashboards. They’re the ones whose product data passes the only test that matters: can an AI agent consume it, trust it, and act on it?

That’s not a question completeness percentages can answer. And waiting for the next quarterly data review to find out is a luxury the agentic era doesn’t offer.

If you’re unsure where your catalog stands, book a demo and we’ll run a sample through the five-metric framework against your actual product data. Most teams are surprised by the gap between their completeness score and their AI readiness score.

Sources and Further Reading

- The Forrester Wave: Data Quality Solutions, Q1 2026 - Forrester’s evaluation of data quality platforms, highlighting the shift to AI readiness

- What’s new in the 2026 Gartner Magic Quadrant for Augmented Data Quality Solutions - Ataccama’s analysis of key scope changes in Gartner’s 2026 evaluation

- What’s new in Akeneo Supplier Data Manager in 2026 - Akeneo SDM changelog including AI-powered mapping with confidence scoring

- Product Data KPIs Every Commerce Leader Should Track - Inriver’s framework for product data performance metrics

- Best Tools for Automating Customer Data Onboarding - Moxo’s study on 70-80% processing time reduction with AI-orchestrated onboarding

- OpenProd.io Features - AI-native product data onboarding for PIM systems