Akeneo shipped one. Pimcore added experimental support. Struct PIM put it on the 2026 roadmap. MCP servers for product data are no longer a differentiator — they’re table stakes. But here’s what nobody in the PIM industry is saying out loud: a vendor-locked MCP server doesn’t solve the problem that actually costs you money.

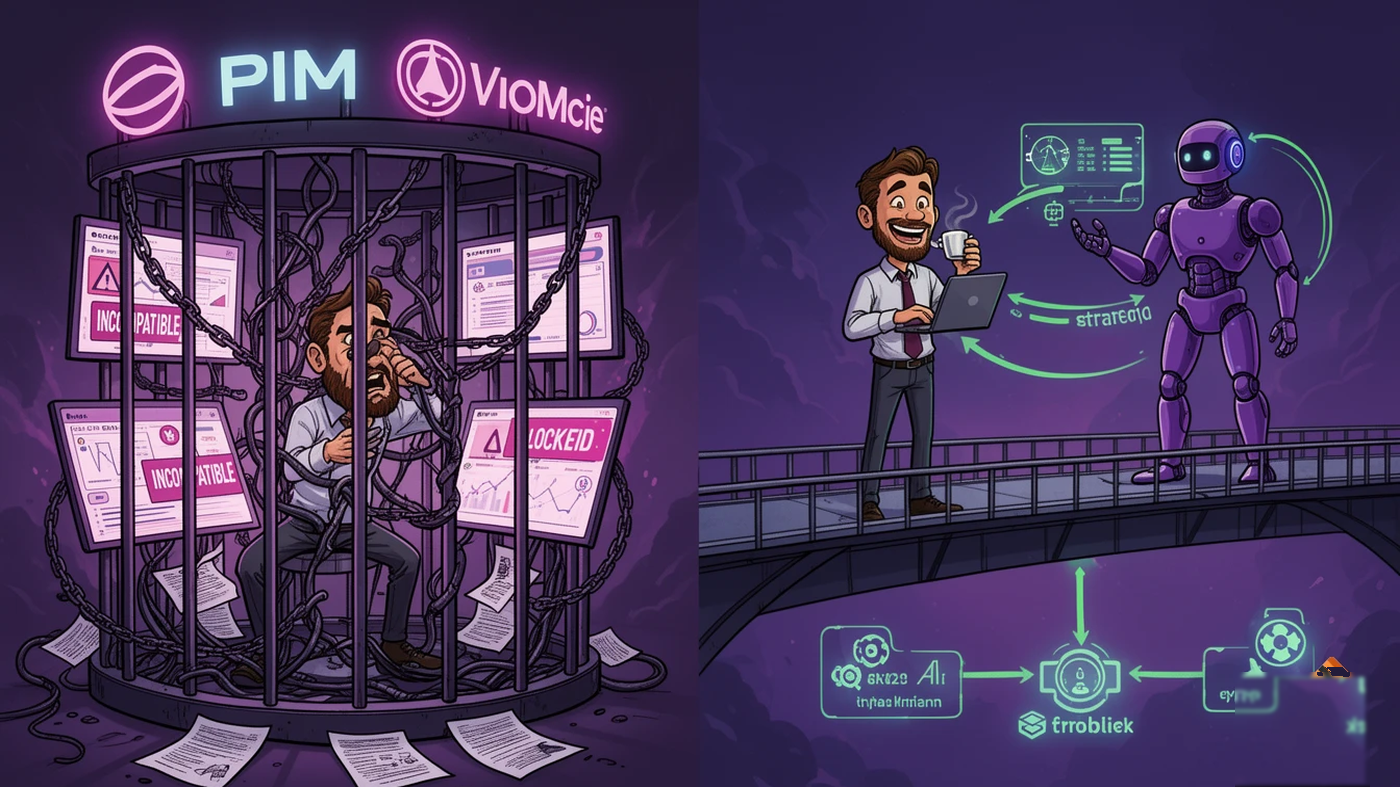

I’ve watched this pattern before. When headless architecture became the PIM buzzword, every vendor slapped “headless” on their marketing page. When AI copilots started trending, every PIM got a chatbot. Now it’s MCP servers. And every single time, the underlying operational pain stays exactly the same: getting messy supplier data into your PIM still takes weeks, costs a fortune, and breaks every time a new supplier sends a catalog in a different format.

Let’s break down what’s actually happening, what it means for your stack, and where the real competitive advantage lies — because it’s not where the vendors are pointing you.

What MCP Servers Actually Do for Product Data

The Model Context Protocol is an open standard from Anthropic that lets AI agents connect to external data sources through a standardized interface. Think USB-C for AI: one protocol, any tool, any model. Instead of building custom integrations for every AI application, you build one MCP server and every MCP-compatible agent can query your data.
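Under the hood, MCP messages are JSON-RPC 2.0: a client discovers tools with `tools/list` and invokes one with `tools/call`. The sketch below shows that request/response shape for a single product-lookup tool. It is a minimal illustration under stated assumptions: the `get_product` tool, the `CATALOG` dict, and the SKU are hypothetical, and it skips the `initialize` handshake and the stdio/HTTP transport that a real server (typically built with an official MCP SDK) would handle.

```python
import json

# Hypothetical in-memory catalog standing in for PIM data (not a real PIM API).
CATALOG = {
    "SKU-1001": {"name": "Cordless Drill 18V", "weight_kg": 1.6},
}

# Tool metadata in the MCP tools/list shape: name, description, JSON Schema for inputs.
TOOLS = [
    {
        "name": "get_product",  # hypothetical tool name
        "description": "Look up one product by SKU.",
        "inputSchema": {
            "type": "object",
            "properties": {"sku": {"type": "string"}},
            "required": ["sku"],
        },
    }
]


def rpc_error(req_id, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}})


def handle(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request for tools/list or tools/call."""
    req = json.loads(raw)
    req_id, method, params = req.get("id"), req.get("method"), req.get("params", {})
    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call":
        if params.get("name") != "get_product":
            return rpc_error(req_id, -32602, "Unknown tool")  # JSON-RPC "invalid params"
        sku = params.get("arguments", {}).get("sku", "")
        product = CATALOG.get(sku)
        text = json.dumps(product) if product else f"Unknown SKU: {sku}"
        # Tool results are a list of content items; isError flags a tool-level failure.
        result = {"content": [{"type": "text", "text": text}], "isError": product is None}
    else:
        return rpc_error(req_id, -32601, "Method not found")  # JSON-RPC "method not found"
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})
```

Sending `{"jsonrpc": "2.0", "id": 2, "method": "tools/call", "params": {"name": "get_product", "arguments": {"sku": "SKU-1001"}}}` returns the product as JSON text inside `result.content`. Because every MCP client speaks this same message shape, the server is written once rather than once per AI application.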

For product data, this is genuinely important. If Gartner is right that 90% of B2B purchases will be intermediated by AI agents by 2028, those agents need structured, real-time access to product catalogs. Not scraped HTML. Not batch-exported CSVs from last Tuesday. Live, authoritative data through a standardized protocol.

Here’s what each PIM vendor has announced:

| Vendor | MCP Status | What It Does | Limitation |
|---|---|---|---|
| Akeneo | Production (Winter Release 2026) | Governed access to Akeneo product data for AI agents; Stripe Agentic Commerce partnership | Only works with Akeneo data. Requires Akeneo ecosystem |
| Pimcore | Experimental (v2025.4) | AI agents can read Pimcore data via Datahub Simple-Rest | Read-only, experimental. Pimcore data only |
| Struct PIM | Planned (2026 roadmap) | Conversational access to product data, controlled updates | Not shipped yet. Struct PIM 4 only |
| OpenProd.io | Production | PIM-agnostic: works with any PIM. Handles onboarding + transformation, not just read access | Focused on data onboarding, not full PIM |

The pattern is clear. Every vendor is building an MCP server that exposes their own data to AI agents. That’s useful. But it misses the harder, more expensive problem entirely.

The Problem MCP Servers Don’t Fix

Here’s the question I keep asking CTOs: your PIM’s MCP server lets AI agents read your product data. Great. But who’s responsible for getting that data into your PIM in the first place?

That’s the EUR 14,000-per-1,000-products bottleneck we’ve documented across 70+ implementations. The manual mapping, normalization, validation, and cleanup that turns a supplier’s chaotic Excel file into structured PIM records.

Akeneo’s MCP server doesn’t help when your supplier sends a PDF catalog with 3,000 products in a format your team has never seen. Pimcore’s experimental MCP doesn’t automate the 18 hours of cleanup when attribute names don’t match your data model. Struct PIM’s roadmap item won’t solve the operational bottlenecks that keep onboarding teams trapped in spreadsheet purgatory.
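To make the first-mile work concrete, here is a minimal sketch of the mapping and normalization step: supplier column headers are normalized, matched against an attribute map, and units are converted, while unrecognized columns are reported rather than silently dropped. The `ATTRIBUTE_MAP`, the German supplier headers, and the gram-to-kilogram rule are hypothetical examples, not OpenProd's or any PIM's actual data model.

```python
import re

# Hypothetical map from normalized supplier headers to PIM attribute codes.
ATTRIBUTE_MAP = {
    "art.-nr.": "sku",
    "article no": "sku",
    "bezeichnung": "name",
    "product title": "name",
    "gewicht (g)": "weight_kg",
    "weight (kg)": "weight_kg",
}

# Divisors that convert a source unit into the PIM unit (grams -> kilograms).
UNIT_DIVISORS = {"gewicht (g)": 1000.0}


def normalize_header(header: str) -> str:
    # Trim, lowercase, and collapse internal whitespace so header variants match.
    return re.sub(r"\s+", " ", header.strip().lower())


def map_row(row: dict[str, str]) -> tuple[dict, list[str]]:
    """Map one supplier row to PIM attributes; return (record, unmapped headers)."""
    record: dict = {}
    unmapped: list[str] = []
    for raw_header, raw_value in row.items():
        header = normalize_header(raw_header)
        target = ATTRIBUTE_MAP.get(header)
        if target is None:
            unmapped.append(raw_header)  # surface for a human (or a model) to map later
            continue
        value = raw_value.strip()
        if target == "weight_kg":
            # Accept decimal commas ("1,6"), common in European supplier files.
            value = float(value.replace(",", ".")) / UNIT_DIVISORS.get(header, 1.0)
        record[target] = value
    return record, unmapped
```

For example, `map_row({"Art.-Nr.": "SKU-1001", "Bezeichnung": "Akkuschrauber 18V", "Gewicht (g)": "1600", "Farbe": "blau"})` returns `({"sku": "SKU-1001", "name": "Akkuschrauber 18V", "weight_kg": 1.6}, ["Farbe"])`. The code is trivial; building and maintaining the map for every new supplier format is where the manual hours go.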

These vendor MCP servers solve the last mile: making clean data available to AI agents. But they ignore the first mile entirely: transforming raw supplier chaos into clean data. And the first mile is where most of the cost and time lives.

Across our 70+ implementations, data onboarding consistently accounts for 60-80% of total PIM project cost. Not configuration. Not customization. Just getting the data in: clean, mapped, validated, and ready for use. No MCP server in the world will help if the data behind it is incomplete, inconsistent, or months out of date.
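"Incomplete, inconsistent, or months out of date" can be checked mechanically before a record ever reaches the PIM. A minimal sketch, assuming a hypothetical set of required attributes and a hypothetical 90-day freshness window; the GTIN check uses the standard GS1 mod-10 check digit.

```python
from datetime import date, timedelta

# Hypothetical readiness rules; real ones come from your PIM's data model.
REQUIRED = ("sku", "name", "weight_kg", "gtin")
MAX_AGE = timedelta(days=90)  # assumed freshness window


def gtin_check_digit_ok(gtin: str) -> bool:
    """GS1 mod-10: weight body digits 3, 1, 3, ... starting from the rightmost."""
    body, check = [int(c) for c in gtin[:-1]], int(gtin[-1])
    total = sum(d * (3 if i % 2 == 0 else 1) for i, d in enumerate(reversed(body)))
    return (10 - total % 10) % 10 == check


def validate(record: dict, last_updated: date, today: date) -> list[str]:
    """Return human-readable issues; an empty list means the record is PIM-ready."""
    issues = [f"missing {attr}" for attr in REQUIRED if record.get(attr) in (None, "")]
    gtin = str(record.get("gtin") or "")
    # GTIN-8/12/13/14 must be all digits with a valid check digit.
    if gtin and not (gtin.isdigit() and len(gtin) in (8, 12, 13, 14) and gtin_check_digit_ok(gtin)):
        issues.append(f"invalid gtin {gtin}")
    if today - last_updated > MAX_AGE:
        issues.append(f"stale since {last_updated.isoformat()}")
    return issues
```

A record that passes returns `[]`; one with a missing weight, a mistyped GTIN, and a year-old timestamp returns all three issues at once, which is the kind of gap an AI agent would otherwise discover at query time.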

It’s like building a beautiful highway exit ramp while ignoring that the on-ramp is a dirt road with potholes. Traffic looks great once it’s on the highway. The problem is getting there.

Vendor-Locked vs. PIM-Agnostic: Why Architecture Matters

Here’s where competitive analysis gets interesting. Every PIM vendor’s MCP server creates a deeper dependency on their ecosystem. Akeneo’s MCP works with Akeneo. Pimcore’s works with Pimcore. Struct’s will work with Struct PIM 4.

That might seem obvious, but consider the implications:

If you run multiple PIMs (and many enterprises do — one for B2B, one for B2C, one inherited from an acquisition), you need multiple MCP servers, multiple integration points, and multiple governance models. There’s no unified layer.

If you’re evaluating PIM switches, your MCP-dependent AI workflows break the moment you migrate. The vendor lock-in that MCP was designed to eliminate gets recreated at the PIM layer.

If your AI strategy evolves faster than your PIM (which it will: Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027), you need an MCP layer that isn’t welded to one vendor’s architecture.

This is exactly why we built OpenProd as PIM-agnostic. The MCP server connects to Pimcore, Akeneo, Ergonode — or all three simultaneously. The AI doesn’t care which PIM holds the data. It cares about getting structured, validated product information through a standardized protocol. And critically, it handles the transformation layer that turns raw supplier data into PIM-ready records before it hits your PIM.
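Architecturally, "PIM-agnostic" usually comes down to an adapter layer: every PIM connector implements the same small interface, and the MCP-facing layer talks only to that interface. A minimal sketch of the pattern, assuming a hypothetical `PimAdapter` interface; `InMemoryPim` stands in for real connectors, which would wrap each PIM's own API and are not shown. This is an illustration of the pattern, not OpenProd's actual code.

```python
from typing import Optional, Protocol


class PimAdapter(Protocol):
    """Hypothetical interface every PIM connector implements."""

    name: str

    def get_product(self, sku: str) -> Optional[dict]: ...


class InMemoryPim:
    """Stand-in for a real connector (e.g. one wrapping a PIM's REST API)."""

    def __init__(self, name: str, products: dict[str, dict]):
        self.name = name
        self._products = products

    def get_product(self, sku: str) -> Optional[dict]:
        return self._products.get(sku)


class ProductRouter:
    """One lookup surface over several PIMs; agents call this, never a specific PIM."""

    def __init__(self, adapters: list[PimAdapter]):
        self.adapters = adapters

    def get_product(self, sku: str) -> Optional[dict]:
        # Ask each PIM in order; tag the answer with its source for traceability.
        for adapter in self.adapters:
            product = adapter.get_product(sku)
            if product is not None:
                return {**product, "source": adapter.name}
        return None
```

With a B2B and a B2C PIM registered, `router.get_product("SKU-2")` answers from whichever one holds the SKU. Migrating a PIM then means swapping one adapter while the tools the agents call stay the same.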

The difference between a vendor MCP and a PIM-agnostic MCP is the difference between a proprietary charging cable and a universal adapter. One locks you in. The other lets you choose.

What “Agent Washing” Means for PIM Buyers

Gartner has already coined the term “agent washing” — vendors rebranding existing features as agentic AI without delivering genuine autonomous capabilities. Only about 130 vendors worldwide offer real agentic AI products, according to Gartner’s analysis.

In the PIM world, I’m seeing the same pattern. Let me translate what the vendor announcements actually mean versus what they imply:

| What They Say | What It Actually Means |
|---|---|
| “Native MCP Server” | AI can read product data from our PIM via a standardized protocol |
| “AI-powered governance layer” | Access controls on what AI can see and do |
| “Agentic commerce ready” | Your data can be queried by shopping agents (if it’s already clean) |
| “AI-driven enrichment” | LLM generates product descriptions from your existing attributes |
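The "governance layer" row is worth demystifying, because at its core it is an access policy. A minimal sketch, assuming a hypothetical per-role policy made of a field allowlist and a write flag, with deny-by-default for unknown roles; any given vendor's governance features will differ in detail.

```python
# Hypothetical policy: fields each agent role may read, and whether it may write.
POLICIES: dict[str, dict] = {
    "shopping_agent": {"read": {"sku", "name", "price", "availability"}, "write": False},
    "enrichment_agent": {"read": {"sku", "name", "description", "attributes"}, "write": True},
}


def visible_fields(role: str, product: dict) -> dict:
    """Return only the fields the role may read; unknown roles see nothing (deny by default)."""
    allowed = POLICIES.get(role, {}).get("read", set())
    return {k: v for k, v in product.items() if k in allowed}


def may_write(role: str) -> bool:
    """Writes are denied unless the policy explicitly allows them."""
    return bool(POLICIES.get(role, {}).get("write", False))
```

A shopping agent asking for a product that carries an internal `purchase_cost` field gets back only SKU, name, and price.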

None of these solve data ingestion. None automate the supplier onboarding process. None give you pre-run cost estimates so your CFO knows exactly what a data onboarding project will cost before you commit budget.

When evaluating MCP capabilities, ask your vendor three questions:

- Does your MCP server handle data coming IN, or only data going OUT? If it’s read-only (like Pimcore’s experimental version), it’s half the equation.

- Does it work with other PIMs, or only yours? If it’s vendor-locked, you’re trading one integration headache for another.

- Can it estimate costs before processing? If you can’t predict the cost of onboarding 5,000 products before running the job, you don’t have enterprise-grade automation — you have an experiment.
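The third question is mostly arithmetic over things you know before the job runs: how many products there are and what each step costs. A minimal sketch, assuming a hypothetical automated per-product rate, an assumed share of products that need human review, and a hypothetical review cost; the manual baseline is the EUR 14,000 per 1,000 products figure cited earlier.

```python
from dataclasses import dataclass

# Manual baseline from the article: EUR 14,000 per 1,000 products.
MANUAL_EUR_PER_PRODUCT = 14_000 / 1_000


@dataclass
class Estimate:
    products: int
    automated_eur: float
    manual_eur: float


def estimate_cost(
    products: int,
    eur_per_product: float,         # hypothetical automated processing rate
    review_share: float,            # assumed fraction of products needing human review
    review_eur_per_product: float,  # hypothetical cost of one human review
) -> Estimate:
    """Price an onboarding job before it runs, using only inputs known up front."""
    if products < 0 or not 0.0 <= review_share <= 1.0:
        raise ValueError("products must be >= 0 and review_share within [0, 1]")
    automated = products * (eur_per_product + review_share * review_eur_per_product)
    return Estimate(products, round(automated, 2), round(products * MANUAL_EUR_PER_PRODUCT, 2))
```

With purely illustrative rates, `estimate_cost(5_000, 0.40, 0.10, 6.0)` prices the automated job at about EUR 5,000 against a manual baseline of EUR 70,000. The numbers matter less than the fact that the estimate exists before any data is processed.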

The Stack That Actually Works in 2026

The winning architecture isn’t choosing between PIM vendors’ MCP servers. It’s layering the right capabilities:

Layer 1: Your PIM (Pimcore, Akeneo, Ergonode — whichever fits your requirements). This is your single source of truth for structured product data. Keep it.

Layer 2: AI-powered data onboarding (OpenProd). This sits between your suppliers and your PIM. It transforms messy, multi-format supplier data into clean, validated, PIM-ready records. It handles the first mile: the part that costs EUR 14K per 1,000 products when done manually, and where automation delivers 95% time savings.

Layer 3: MCP for external consumers. Your PIM’s native MCP server (or OpenProd’s PIM-agnostic one) exposes clean data to AI agents, agentic commerce platforms, and Digital Product Passport systems.

Most vendors want you to believe Layers 1 and 3 are enough. They’re not. Without Layer 2, your MCP server is a beautiful window into a half-empty room. AI agents will query your product data and find gaps, inconsistencies, and missing attributes — because the data never got properly onboarded in the first place.

The enterprises that get this right will build their CFO-ready business case around the full stack, not just the shiny MCP endpoint. The payback period drops from 12+ months to under 6 when you factor in the onboarding automation that makes the MCP layer actually useful.
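Payback period here is the simple, undiscounted kind: upfront cost divided by monthly savings. A minimal sketch that sums several savings streams, so the effect of adding onboarding automation to an MCP-only business case is visible; every input in the usage line below is made up for illustration and is not drawn from the implementations mentioned above.

```python
import math


def payback_months(upfront_eur: float, monthly_savings_eur: dict[str, float]) -> float:
    """Undiscounted payback: months until cumulative monthly savings cover the upfront cost."""
    total = sum(monthly_savings_eur.values())
    if total <= 0:
        return math.inf  # savings never cover the investment
    return upfront_eur / total
```

With hypothetical inputs, `payback_months(60_000, {"agent_channel": 5_000})` gives 12.0 months, while `payback_months(80_000, {"agent_channel": 5_000, "onboarding_automation": 10_000})` gives about 5.3: the extra investment pays off faster because it brings a larger savings stream with it.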

Are you building the highway and the on-ramp? Or just the exit?

Sources and Further Reading

- Akeneo Winter Release 2026: Executing at the Speed of AI

- Akeneo Accelerates Commerce Velocity as Agentic AI Transforms Digital Engagement

- Pimcore Platform Version 2025.4 — Built for the Future

- Struct PIM Roadmap 2026

- Commercetools: 7 AI Trends Shaping Agentic Commerce in 2026

- Informatica: Understanding Model Context Protocol’s Role in AI

- Forbes: Agentic AI Takes Over — 11 Shocking 2026 Predictions

- CIO: Why Most Agentic AI Projects Stall Before They Scale

- Stamus Networks: How MCP and Open Standards End Vendor Lock-in